On a project where I was advising senior product leadership at a large global financial institution, we kept hearing “this feature will drive impact” or, worse, “sales needs this.” But nobody could answer the simplest follow-up: “Impact on what, measured how, and by when?”

All features were equally “needed” and there was a shopping cart filled with them.

With the agreement of the head of product, we ran a workshop to address that.

The goal: take a broad company mission (“Help advisors help more people”) and force it into measurable, testable statements—north star metrics, leading indicators, and a release measurement plan.

In this blog post I will describe the prompts we used to get there, the agenda and some facilitation tricks.

What we were trying to accomplish

The prompts (agenda & worksheet) we used were intentionally blunt:

We first asked:

“Our goal is ‘Help advisors help more people’. What are our north star metrics?”

Followed immediately with:

“What are leading indicators that show progress towards the north star(s)?”

We then framed the feature sets in a hypothesis format:

“We believe that {building XYZ} for {user segment} addresses their {user need / pain} and will achieve {outcome}. And we will know we are on the right track when we measure {leading indicator}.”

And finally, we reframed everything at launch time:

“We’re launching feature … How will we know that our feature is driving the relevant leading indicators of success?”

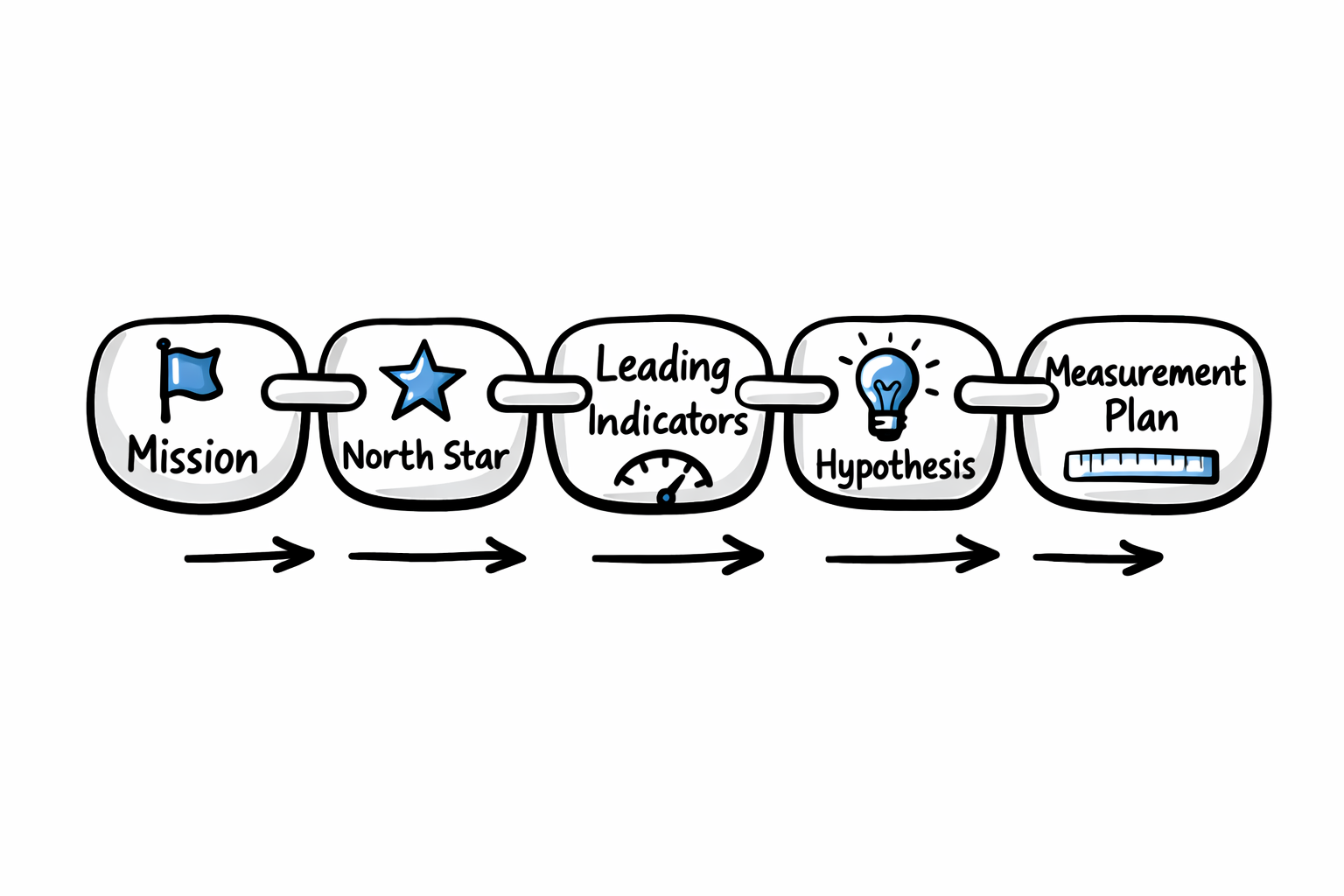

The purpose wasn’t “pick some KPIs.” It was to build a shared chain of logic:

mission → north star → leading indicators → feature hypotheses → measurement plan.

If you can’t draw that line, you’re guessing.
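For readers who think in code, the hypothesis template can be sketched as a small data structure. This is only an illustration, not something we used in the room: the `Hypothesis` class, its field names, and the example feature are hypothetical. The point it makes is that a hypothesis with a blank slot cannot be stated at all.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Hypothesis:
    """One feature idea expressed in the workshop's hypothesis format."""
    feature: str            # {building XYZ}
    user_segment: str       # {user segment}
    user_need: str          # {user need / pain}
    outcome: str            # {outcome}
    leading_indicator: str  # {leading indicator}

    def missing_links(self) -> list[str]:
        """Return the names of template slots that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

    def render(self) -> str:
        """Fill the template; refuse if any link in the chain is missing."""
        missing = self.missing_links()
        if missing:
            raise ValueError(f"Cannot state hypothesis, missing: {', '.join(missing)}")
        return (
            f"We believe that {self.feature} for {self.user_segment} "
            f"addresses their {self.user_need} and will achieve {self.outcome}. "
            f"And we will know we are on the right track when we measure "
            f"{self.leading_indicator}."
        )


# Hypothetical example values, for illustration only.
example = Hypothesis(
    feature="building a pre-filled discovery intake form",
    user_segment="advisors",
    user_need="manual data entry",
    outcome="more financial plans generated per week",
    leading_indicator="time to complete the first discovery intake form",
)
print(example.render())
```

A feature request that arrives as “sales needs this” fails `render()` right away, because the outcome and the leading indicator are empty.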

The setup

For over 12 months I was embedded at the institution as a full-time product lead consultant, reporting to the senior director of product. The product leadership team—by their own admission—was operating off a shopping list. No shared definition of success.

We ran a few online sessions to set the stage, I spoke 1:1 with participants, and then the institution flew the product leads from across the US to its central office for a face-to-face workshop.

In the room: the institution’s divisional head of product and 7 product leads (each managing other product managers). The online sessions had covered impact vs effort, hypothesis-driven decision making, and avoiding app customization. Now we needed to align the overall north star metric with individual initiatives across all the leads.

How I ran it (and why the timing matters)

The agenda was a tight, structured sequence—about 85 minutes end to end, including share-backs:

- Welcome + recap (10 min): quick grounding in what we learned from prior sessions.

- North stars (5 min): define candidate north star metrics for “Help advisors help more people.”

- Leading indicators (15 min total): individual thinking, pair sharing, then breakout to converge.

- Share-back (15 min): groups report their top picks.

- In practice (20 min): apply the logic to a concrete initiative and outline rollout + measurement.

- Compare approaches (20 min): share plans, interrogate assumptions.

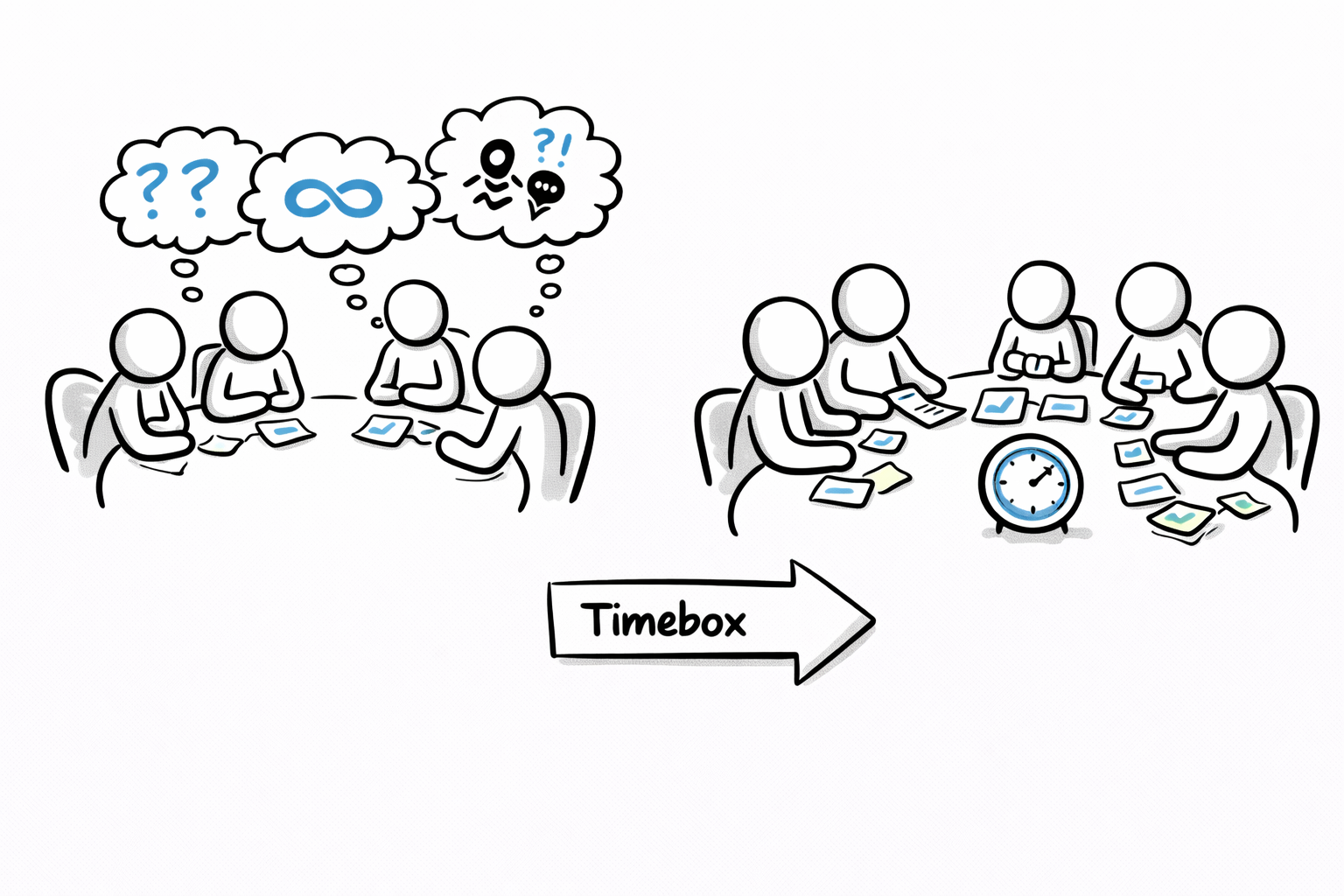

A facilitation note: short segments are a feature, not a bug. When you give teams unlimited time on metrics, you often get philosophy debates. Timeboxing pushes people toward operational definitions (“what would we actually measure?”), which is where the value is. And when that can’t be answered, the fallback question is: “What’s the next action item we can take to get there?”

To set the mood I played soft chill music at the very start, during solo activities, and at wrap-up. Small thing, but it signals “this isn’t a status meeting.”

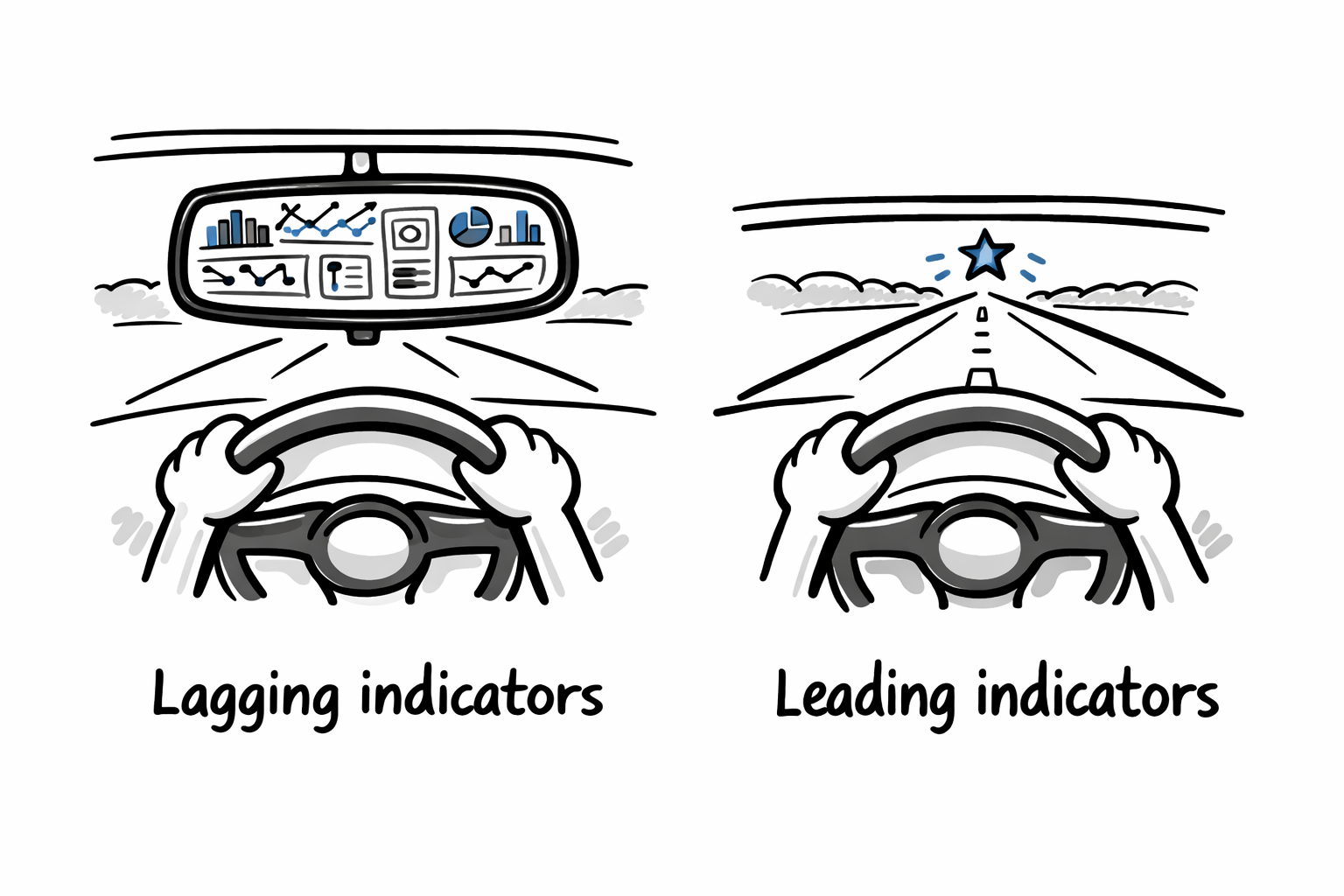

What we covered: From lagging “score” to leading “steering”

A few prompts from the agenda were deliberately pointed:

- “When we heard from your reports… we noticed no one had a clear measure for what ‘Impact’ means.”

- “PMs don’t seem to have a clear benchmark for knowing if a given feature is successful, nor how to prioritize what’s next.”

That set the tone: we weren’t there to admire the mission statement—we were there to make it measurable. The most significant shift happened when we moved from lagging business goals (the score) to leading product metrics (the steering wheel).

The Strategic Shift

Note: I’ve kept the high-level logic intact, but I’ve obfuscated specific internal metrics and insights to respect client confidentiality.

Overall Mission

- Before (Lagging): Increase AUM (Assets Under Management)

- After (Leading): Financial plans generated per week

- Instrumentation Priority: Track “Plan Created” events in app xyz.

Trading App

- Before (Lagging): Increase trade volume

- After (Leading): Time to complete a rebalance cycle

- Instrumentation Priority: Track click-to-completion time.

Proposal Journey

- Before (Lagging): Customer retention

- After (Leading): Prospect-to-Client conversion time

- Instrumentation Priority: Funnel event tagging.

Investor Portal

- Before (Lagging): Annual satisfaction score

- After (Leading): Monthly active users (MAU)

- Instrumentation Priority: Session frequency tracking.

Warning: If your only measures are lagging (revenue, retention six months later), you’ve built a dashboard, not a steering wheel.
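To make the instrumentation priorities concrete, here is a minimal sketch of how two of the leading indicators above could be computed from raw events. Everything in it is assumed for illustration: the event names (`plan_created`, `rebalance_started`, `rebalance_completed`), the tuple layout, and the sample data are hypothetical and do not reflect the client's actual tracking.

```python
from collections import Counter
from datetime import datetime
from statistics import median

# Hypothetical event log: (event name, flow id, timestamp).
EVENTS = [
    ("plan_created", "p1", datetime(2024, 1, 1, 9, 0)),
    ("plan_created", "p2", datetime(2024, 1, 3, 14, 30)),
    ("plan_created", "p3", datetime(2024, 1, 9, 11, 0)),
    ("rebalance_started", "r1", datetime(2024, 1, 2, 10, 0)),
    ("rebalance_completed", "r1", datetime(2024, 1, 2, 10, 12)),
    ("rebalance_started", "r2", datetime(2024, 1, 4, 11, 0)),
    ("rebalance_completed", "r2", datetime(2024, 1, 4, 11, 20)),
    ("rebalance_started", "r3", datetime(2024, 1, 5, 9, 0)),  # never completed
]


def plans_per_week(events):
    """Count 'plan_created' events per ISO (year, week)."""
    weeks = Counter()
    for name, _, ts in events:
        if name == "plan_created":
            iso = ts.isocalendar()
            weeks[(iso[0], iso[1])] += 1
    return dict(weeks)


def median_completion_seconds(events, start="rebalance_started",
                              end="rebalance_completed"):
    """Median click-to-completion time; flows that never finish are ignored."""
    started = {}
    durations = []
    for name, flow_id, ts in sorted(events, key=lambda e: e[2]):
        if name == start:
            started[flow_id] = ts
        elif name == end and flow_id in started:
            durations.append((ts - started.pop(flow_id)).total_seconds())
    return median(durations) if durations else None


print(plans_per_week(EVENTS))
print(median_completion_seconds(EVENTS))
```

Both numbers can be read week by week, which is what makes them steering inputs rather than a score. In a real setup the abandoned flows (like `r3` here) probably deserve their own metric instead of being dropped.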

Outcomes: what teams walked away with

The explicit workshop deliverable was: a prioritized set of leading indicators and a plan to measure them for a real feature rollout. More importantly, the group practiced a repeatable pattern:

- State the mission in plain language (we used “Help advisors help more people”).

- Propose north stars. For example: “Number of financial plans generated per week.”

- Define leading indicators (what moves before the north star moves, e.g. time to complete the first discovery intake form).

- Convert feature ideas into hypotheses using the template.

- For a specific initiative, answer: how will we know this release is successful?

What worked well (and why)

Planning the workshop. The 1:1 pre-workshop sessions helped me understand each product lead’s context, which let me frame a purpose and outcome that showed participants we were all in this together and kept them engaged. Additionally, strict timeboxing, buffered with intentional padding, ensured we wrapped up on time.

The worksheet forced precision. The hypothesis format is simple, but it prevents mixing features (“add a dashboard”) with outcomes (“reduce advisor busywork”). The presence of the right people in the room (including the head of product) gave the group confidence this wasn’t just a futile exercise.

The “instrumented app” assumption kept us honest. In the agenda, the practice section explicitly assumes the product is fully instrumented. This exposes the gap immediately: if you can’t measure success, your first “feature” must be analytics plumbing. When teams inevitably argued, “but we don’t have that data,” the workshop outcome shifted from ‘build the feature’ to ‘build the telemetry.’ We treated instrumentation as the prerequisite, not the afterthought.

Comparing rollout plans created shared standards. When different groups present how they’d measure a release, you start to see patterns—what’s rigorous vs. what’s wishful thinking.
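The “instrumented app” check described above can be written down as a tiny rule. This is a sketch under assumptions, not a tool we used: the function names and the event names are hypothetical.

```python
def instrumentation_gaps(required_events, tracked_events):
    """Return required events that are not tracked yet, sorted for stable output."""
    return sorted(set(required_events) - set(tracked_events))


def next_step(feature, required_events, tracked_events):
    """If the hypothesis cannot be measured, telemetry comes before the feature."""
    gaps = instrumentation_gaps(required_events, tracked_events)
    if gaps:
        return f"Build the telemetry first: {', '.join(gaps)}"
    return f"Build the feature: {feature}"


# Hypothetical example: the app tracks logins and plan creation only.
tracked = {"login", "plan_created"}
print(next_step("pre-filled intake form",
                ["intake_form_started", "intake_form_completed"],
                tracked))
```

The design choice is deliberately blunt: any missing event blocks the feature, which mirrors how we treated instrumentation as the prerequisite.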

Tradeoffs and when this doesn’t work

- Vanity North Stars: If leadership wants a single metric for everything, you’ll end up with a vanity number.

- Gaming Proxies: Leading indicators can become proxies that teams game (e.g., optimizing onboarding prompts instead of real value).

- Data Model Gaps: You can’t measure what your data model doesn’t capture. Some teams will need a follow-up session on instrumentation.

If you want to run this workshop yourself

- Prepare and plan well beforehand.

- Bring one real initiative per group. Concrete examples like “trading app” or “proposal journey” are perfect. Do not bring toy examples that don’t apply to senior leaders with busy schedules, and especially do not craft toy examples during the workshop.

- Timebox ruthlessly while helping the group hang in there and move through.

- Acknowledge when a parking lot and follow-ups will be necessary after the session.

- Schedule a 30-minute follow-up. Metrics without ownership rot fast.

I’ve included the exact PDF assets we used so you don’t have to design the logic flow from scratch: