In short:

A client shipped an 80-prompt LLM pipeline for extracting data from commercial real estate PDFs. No evals, no audit trail, no dashboards. By month five, operators were archiving 70-80% of the AI’s output.

The fix wasn’t a better model. It was instrumenting the system: dashboards that segmented error rates by field, an audit trail on human corrections, deterministic warnings that replaced a failing AI QA step, and a prompt architecture that let the team iterate on one field without breaking the other ten.

The post walks through six interventions, with archival data, correction breakdowns, and the prompt architecture before and after.

Note: I’ve kept the high-level logic intact, but I’ve obfuscated specific internal metrics and insights to respect project confidentiality.

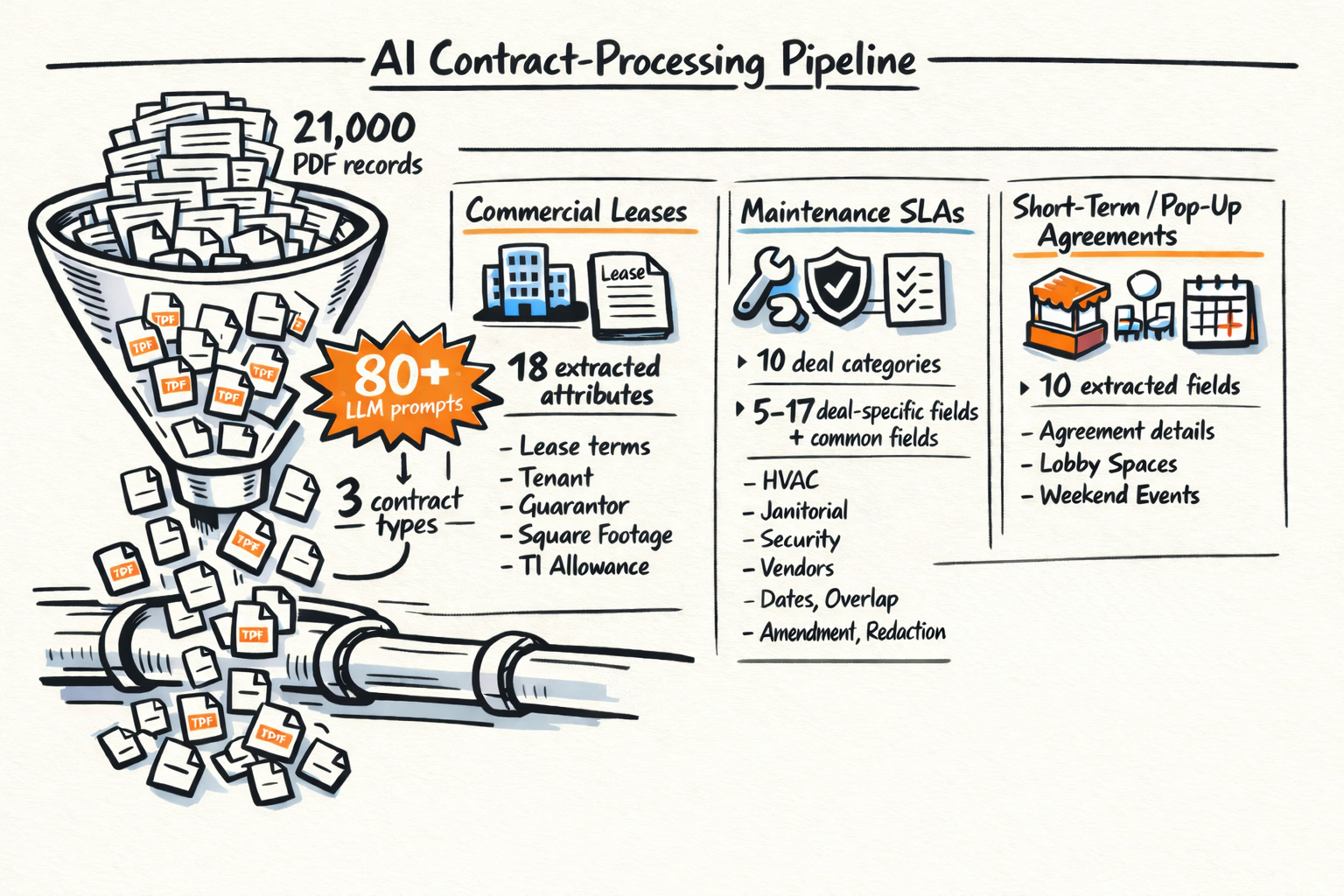

A client had built an LLM-powered pipeline to extract structured data from commercial real estate PDFs. These weren’t standardized forms—they were custom commercial leases, maintenance SLAs, and short-term pop-up agreements written by dozens of different property managers. The system used 80+ LLM prompts to turn that unstructured legal text into a clean database, replacing a team of 30 temp workers who previously spent three months each year reading them and typing the data in to the system.

The LLM pipeline was built with no evals. No traceability on LLM calls. No audit trail on human overrides. No dashboards. The only signal on accuracy was anecdotal: operator reports and system downtime.

I was brought in by the CEO as a consultant to understand the lay of the land. The first question — how bad is it? — had no answer, because nothing was measured.

What shipped

The data ingestion pipeline handled three contract types and, from June 2025 through March 2026, ingested 21,000 PDF records. Across those workflows, it ran more than 80 LLM prompts.

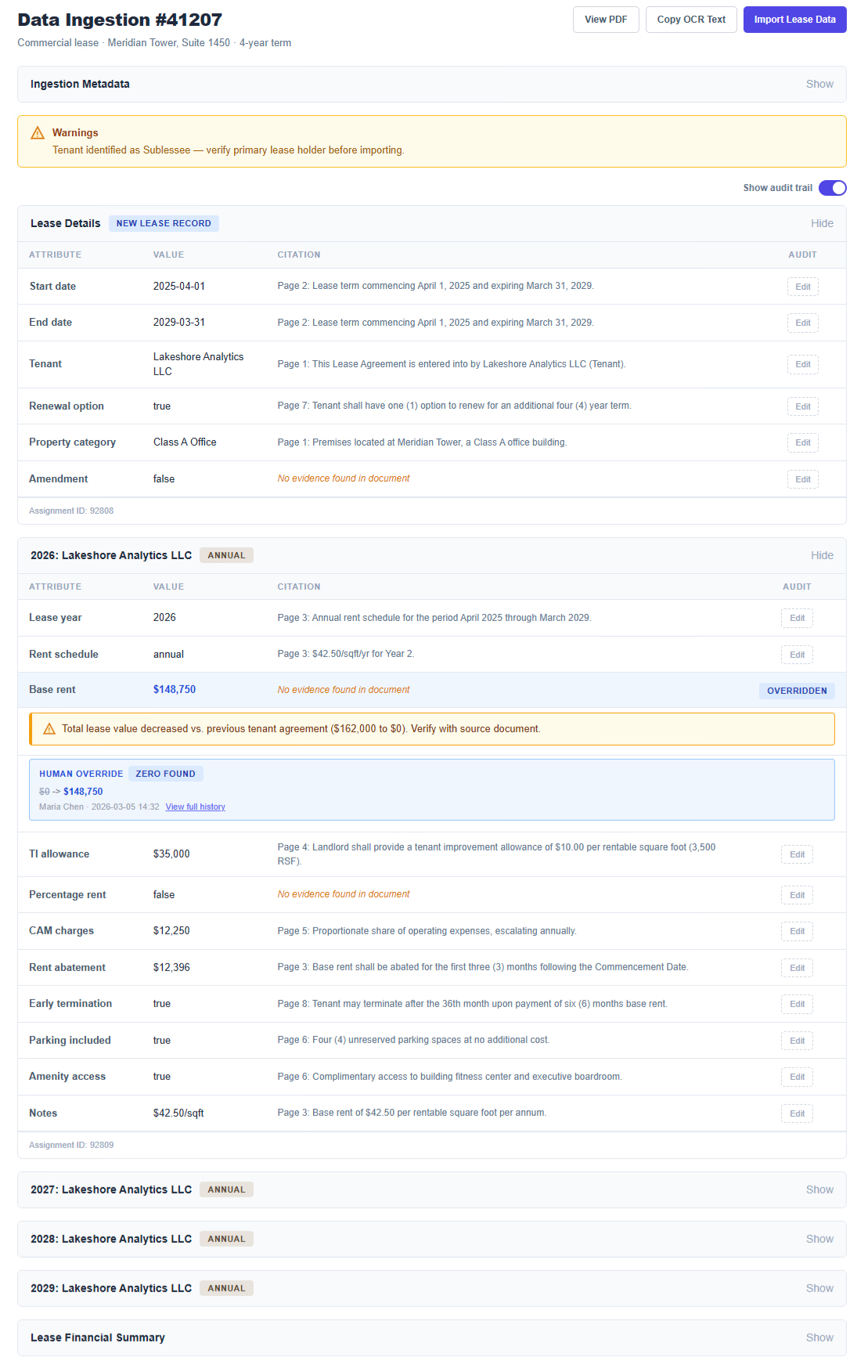

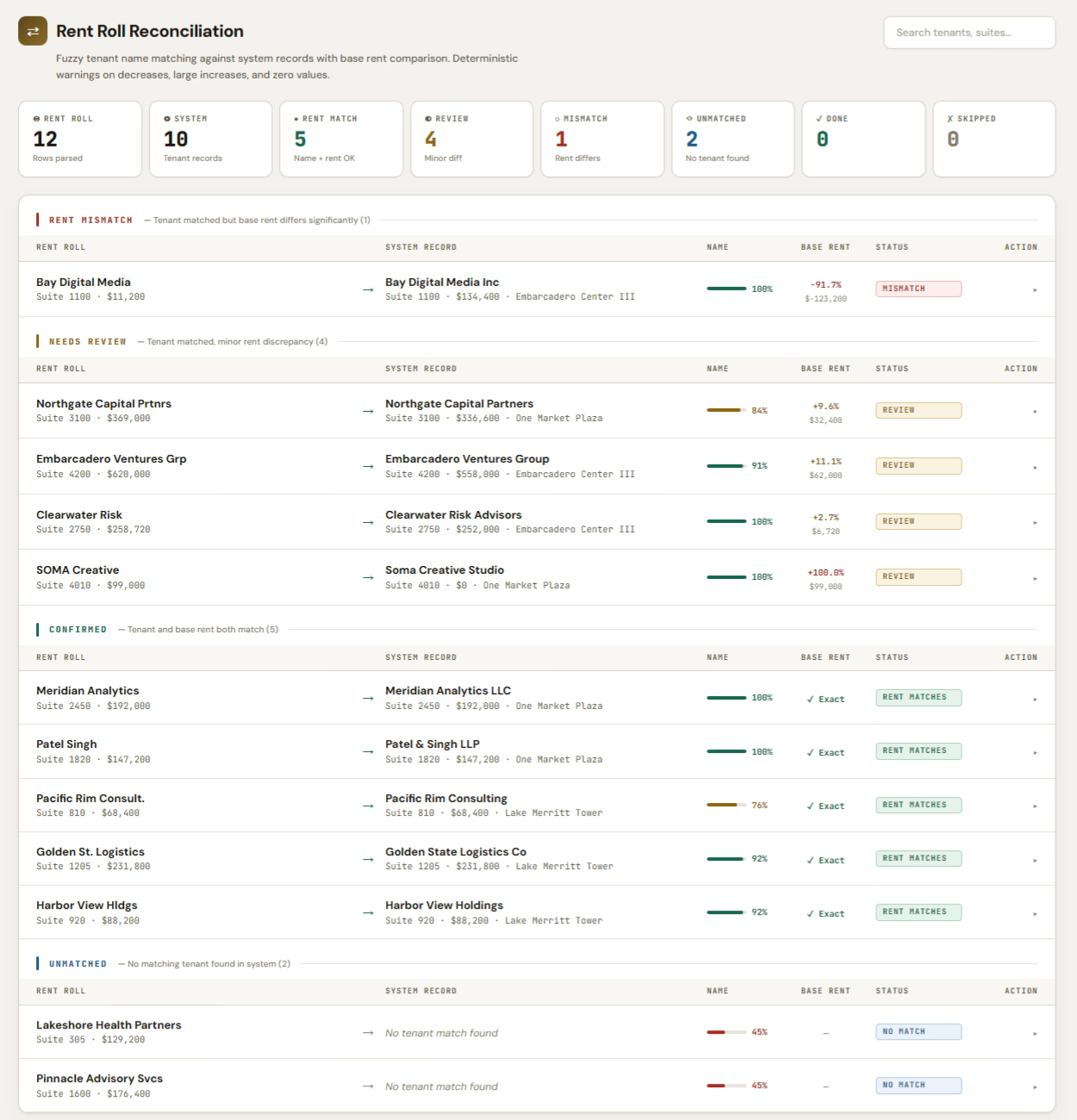

- Commercial Leases: 18 extracted attributes per document: lease terms, tenant identification, and financial fields. A subtype, rent rolls, listed hundreds of tenants and rents in a single PDF.

- Maintenance SLAs: 10 deal categories, each with its own schema, pulling 5 to 17 deal-specific fields plus shared fields.

- Short-Term/Pop-Up Agreements: 10 extracted fields: five for the agreement, five for each space or event.

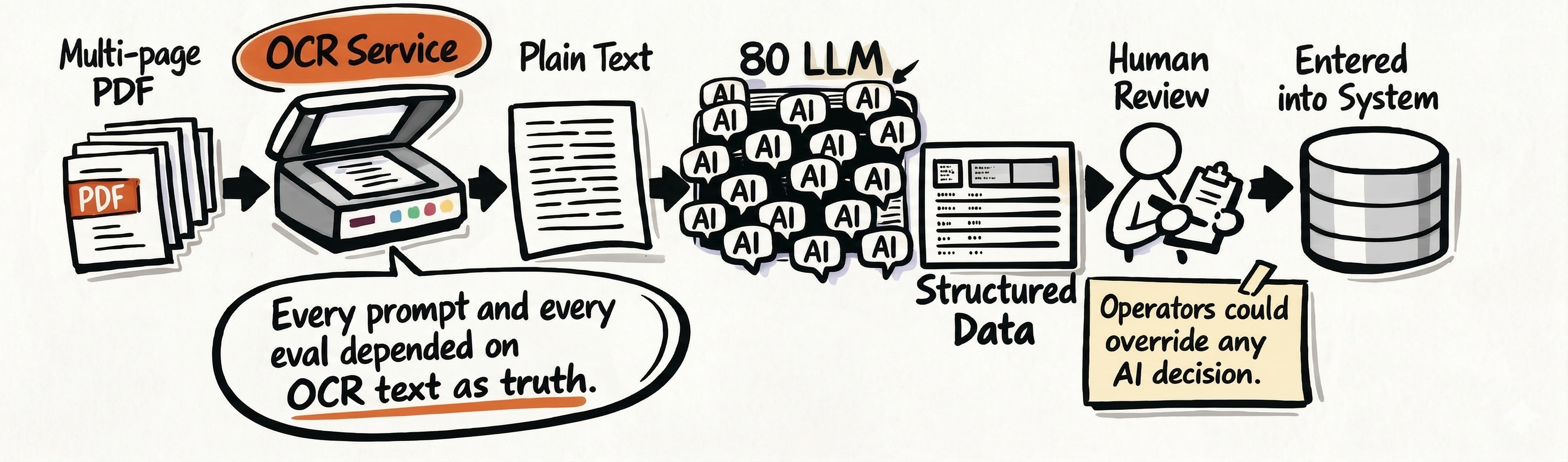

Contracts OCR conversion

Before any extraction happened, each multi-page PDF went through an OCR service that converted it to plain text. The entire downstream pipeline — every prompt, every eval — treated that text as ground truth.

Once the OCR’d text went through the pipeline’s LLM prompts, it became a structured proposal that a human operator would review and enter into the system. The operator could override any AI decision.

No evals, no guardrails

The pipeline shipped without acceptance criteria, automated tests, auditability, or guardrails. There was no way to verify correctness, no way to trace decisions, and no definition of what “good enough” looked like.

Worse, the pipeline corrupted existing data. For some contracts, figures like base rent were already in the system. The UI didn’t surface those existing values alongside the AI extractions in a reasonable way, so operators had to navigate a laborious review process. It was easy to accidentally override correct data that was already there with an incorrect AI extraction.

After the original team’s initial build from March to August, my engagement began in October/November to course correct the project.

Measuring error rates

Before: error rates were invisible.

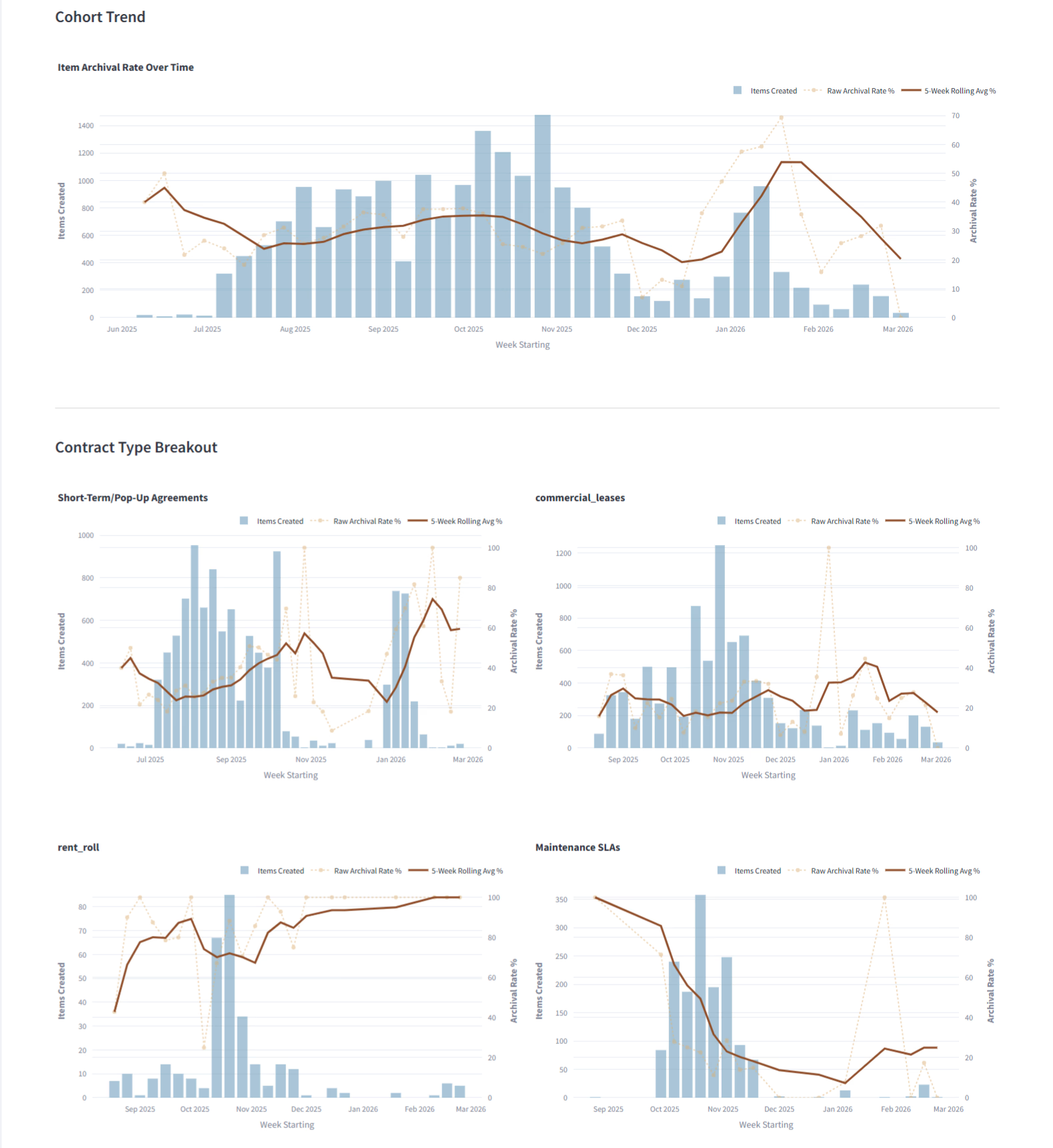

The archival rate

The first thing I measured was the most visible problem: how many documents operators were throwing away.

From June through September 2025, 8,902 top-level PDFs entered the pipeline and 2,848 were eventually archived — a 32.0% archival rate. But that headline number masked huge differences by document type.

| Subtype | Jun–Sep 2025 | Oct 2025–Jan 2026 |

|---|---|---|

| Short-term/pop-up agreements | 33.4% (2,374 / 7,112) | 54.8% (1,861 / 3,393) |

| Commercial leases (individual) | 24.9% (432 / 1,737) | 23.3% (1,524 / 6,542) |

| Rent rolls | 78.8% (41 / 52) | 79.6% (199 / 250) |

| Maintenance SLAs | — | 25.1% (373 / 1,489) |

Rent rolls were catastrophic from the start — nearly 80% archival in both periods. Individual commercial leases stayed stable around 24%. The overall pipeline didn’t jump uniformly to 70-80%. The pain was concentrated in specific workflows.

For a system built to replace a team of 30 humans, those subtype-level rates are the real alarm bell.

The failure wasn’t evenly distributed so I had to ruthlessly prioritize with leadership what the dev team would be focusing on.

When we looked at archive reasons, the dominant pattern was not model hallucination. It was operational friction:

- Missing deduplication: In October and January, operators discarded over 3,000 processed documents with notes like “Already in system” or “duplicate”. The system was spending time and API compute extracting data that the company already had.

- Wrong tool for the job: The pipeline choked on rent rolls. Operators discarded the vast majority of those with manual notes like “big list (rent roll)” or “error, huge list weird source”.

What this data doesn’t show is why each document was archived. A duplicate and a crash both count the same. The operator notes were the only signal on cause, and those were free-text — inconsistent and unstructured. We knew which workflows were failing. We didn’t yet know the mix of causes.

From now on I’ll focus on commercial leases because it was the clearest slice of the system, but the same structural problems showed up everywhere else.

The human overrides rate

The archival rate told us the pipeline was failing to process documents. The next question was whether the documents it did process were accurate. That required a different kind of measurement.

Because the pipeline handled dozens of distinct deal types and schemas, the extraction surface was massive. A single error rate across the whole pipeline would be meaningless — base rent extraction on a commercial lease has nothing in common with liability threshold extraction on a security firm SLA. Any useful measurement had to be segmented by contract type, deal type, and individual attribute.

The company reacted to anecdotal reports from operators using the app UI. Nobody could quantify whether automation was better or worse than the manual process it replaced.

I introduced dashboards to monitor error rates using a rolling, rank-based batched approach. Because contracts arrived in bursts rather than a steady stream, we grouped them by count rather than by time. We tuned the batch sizes to the volume of the document type—batches of 100 for commercial leases, and 50 for vendor SLAs—which normalized the data and prevented arbitrary spikes in the metrics.

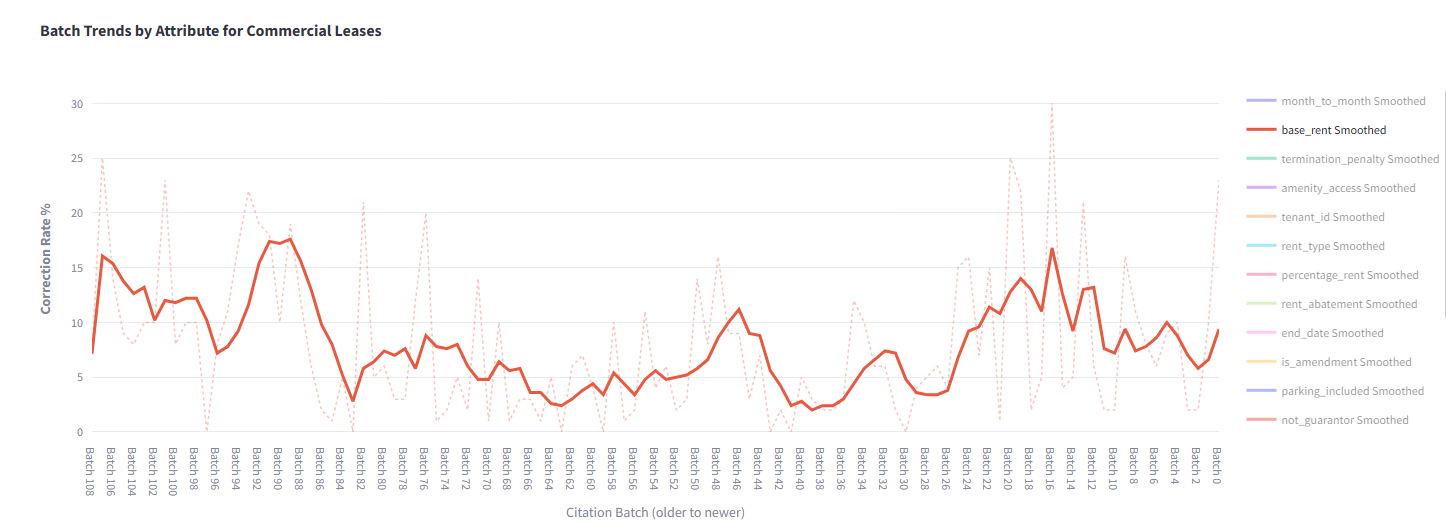

Here’s what the correction rate looked like for base_rent on commercial leases — 10,870 extracted values across 109 batches of 100:

The per-batch rate swings from 0% to 30% between adjacent batches. The rolling 5-batch average smooths that out but still oscillates between roughly 3% and 17% — no clean improvement trend across the full dataset. The middle of the dataset (batches 60–40) is the calmest stretch, with the rolling average sitting at 3–6%. Both ends are noisier.

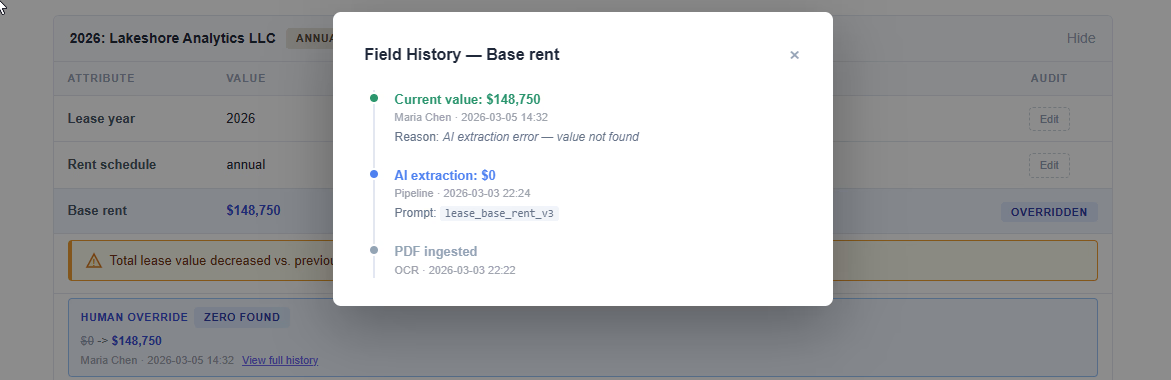

Two things this chart makes visible. First, individual batch rates are meaningless without smoothing — anecdotal reports of “it’s getting worse” or “it’s fine now” could both be true on any given week. Second, the correction rate alone can’t distinguish genuine extraction errors from stale-source overrides (where the AI got the right value from the lease, but the system already had a more current number from an updated addendum). That ambiguity is part of why later I’ll talk about Annotating human corrections.

Human In The Loop audit trail and cutting dead weight

Before: human corrections were invisible.

When a human overrode an AI decision, the original AI value was lost. The only way to detect an override was an updated_at field different from created_at on the record (that’s how the previous errors were calculated).

We introduced auditing on human interventions. Operators could now see what the previous value was. Managers and engineers could finally identify error patterns in AI classifications.

That solved the visibility problem for overrides. A separate signal (product usage) showed that some existing features weren’t contributing to quality at all, starting with the pipeline’s built-in QA.

Replacing AI QA with deterministic warnings

The pipeline included an AI-powered QA step meant to be the safety net. After extraction, it would send the database representation of the contract back to the AI alongside the PDF text. Operators were meant to read through the AI feedback and verify the import before it went live.

It didn’t work. The LLM feedback was generic and rarely actionable… it surfaced something useful maybe 2 times out of 10.

The core issue was scope. Feeding a multi-page lease and a full JSON object into a single comparison LLM prompt produced brittle, inconsistent results.

If a safety net fails 80% of the time, users stop looking at it. Dashboards showed operators ignored the AI QA entirely. I removed it. Nobody missed it.

I made a deliberate product decision: swap a cheap but misleading LLM step for free deterministic IF statements.

We knew from our data scientist’s work that about 12% of financial values entered through the pipeline were later corrected. We analyzed those corrections to find patterns, then turned those patterns into UX warnings that fired before data entered the system. For commercial leases, we flagged:

- Any decrease in total lease value compared to the previous tenant’s agreement

- Same property: >10% increase in total lease value compared to the previous term

- New property class: >20% deviation from the regional average

These weren’t AI. They were the same sanity checks a human reviewer would apply mentally, now surfaced directly in the workflow. Operators could ignore the warnings. Human judgment still took precedence. But every override was audited, so patterns of ignored warnings became visible.

The AI QA step tried to review everything and helped with very little. These deterministic checks focused on a few high-risk cases and caught most of what mattered.

Cutting the confidence score

The prompts returned a confidence_level (0–100) alongside each extraction. In theory, low-confidence values would receive more scrutiny.

In practice, the scores didn’t correlate with actual errors. Human corrections occurred at similar rates across both high and low confidence outputs. Operators learned to ignore the signal.

That was enough to conclude the signal was misleading. We removed it.

Moving hardcoded prompts to an AI Gateway

Before: a massive bottleneck to iteration.

The prompt architecture for commercial leases had a structural problem. One prompt template lived in the AI gateway (Portkey). The application called it 11 times — once per lease field — injecting field-specific instructions that made a single shared prompt behave like 11 different tasks.

The real problem was that no prompt could be changed safely for a single attribute. Because 11 different data fields shared one prompt, the blast radius for a change was 100%. If a product manager wanted to improve the accuracy of base_rent, the engineering team couldn’t do it without risking regressions in the 10 other fields.

In practice, a few lines of field-specific logic were embedded inside ~80 lines of shared extraction rules. We weren’t controlling 11 prompts—we were trying to steer one massive prompt in 11 different directions.

Here’s a trimmed version of the shared wrapper. The three ← injected by app variables were the only parts the application controlled — everything else was shared across all 11 calls:

You are a meticulous contract-analysis engine.

All facts MUST come from the supplied contract_text only.

############## INPUT VARIABLES ##############

- contract_text: {{contract_text}}

- property_name: {{property_name}}

- lease_years: {{lease_years}}

- is_rent_roll: {{is_rent_roll}}

- tenant_name: {{tenant_name}}

- attribute_abbrv: {{attribute_abbrv}} ← injected by app

- attribute_full_form: {{attribute_full_form}} ← injected by app

- attribute_description: {{attribute_description}} ← injected by app

############## EXTRACTION RULES ##############

1. Split-page handling (portrait rent rolls)

Rent rolls might be wide tables broken across consecutive pages.

Always virtually join such paired pages before searching.

2. Tenant filtering (is_rent_roll == true)

Locate rows pertaining exactly to tenant_name.

Ignore data for all other tenants.

3. Value determination

Follow the logic in attribute_description to decide the value.

If the description calls for calculations, perform them.

If nothing matches, use the default fallback.

4. Per-year mapping

For every year in lease_years produce a sub-object with keys:

value, citation, confidence_level.

5. Citation rules

[~20 lines of citation formatting instructions]

############## OUTPUT FORMAT ##############

[12 lines of JSON schema and response rules]

############## EXAMPLES ##############

[25 lines of worked examples]

############## QUALITY & SAFETY ##############

[4 lines of validation rules]The app injected three variables: attribute_abbrv (e.g., base_rent), attribute_full_form (e.g., “Base Rent”), and attribute_description (the field-specific extraction rules). Everything else — the extraction rules in sections 1-5, the examples, the output format — was shared and identical for all 11 calls. The field-specific instructions were one variable inside ~80 lines of shared behavior.

In that setup, changes to a single attribute were unreliable because the shared context overpowered the specific instructions.

The fix: one prompt, one field

Each of the 11 attributes got its own complete prompt with a stable ID. We didn’t just move text out of the application; we decoupled the architecture so the product team could iterate, test, and deploy improvements to single features without breaking the rest of the application.

Now when base_rent extraction needs a prompt change, you edit that one prompt, run evals, verify the change achieved its goal, and promote that prompt to production. No risk to the other 10 attributes. And in the logs, each call is identifiable by its prompt ID.

Agentic coding helped speed this multi-week migration to a few days. The work was mechanical — moving text from the app backend into the AI gateway — but there were 68 prompts to migrate across the whole pipeline. I explicitly required no changes to prompt content during migration, since any variation could impact error rates.

This architectural cleanup wasn’t just about clean code—it was the prerequisite for finally running the evals that had been missing since day one. Until we could isolate a prompt, evaluating it was going to add extra complexity.

Splitting uber prompts

The contract-level details call — extracting six fields in a single shot — had the highest override rates. The model was doing too much at once. It couldn’t reliably extract all six fields in a single pass, so we split it into focused individual prompts, one per field.

The tradeoff is more API calls per document. The gain is focused context for each field, which would improve extraction accuracy, a pattern I’ve successfully used before to increase recall on job classifiers.

Once every field had its own call, we linked each LLM call to the ingested contract ID. That gave us a per-contract AI timeline, a trace of all the calls a document went through.

Just like the hardcoded prompt migration, splitting this uber prompt was a necessary structural fix to enable product iteration. We couldn’t measure or fix what the model was doing wrong when it was trying to juggle six things at once.

Backfilling evals

Because the system was originally shipped without evals, we had no baseline to measure against. Now that there was clarity on the prompts — each attribute isolated in its own Portkey call, identifiable in logs — we could start backfilling evals.

Part of this involved domain experts assembling a diverse set of contract documents to stress the pipeline. The mandate was not to change the prompts but to get some numbers on current accuracy.

The eval itself was a Python script with a series of guidelines. Each prompt that extracted an individual attribute had to be evaluated against that document set.

Building that document set surfaced the rent rolls scenario causing the massive archival spike. For multi-year contracts, a property manager would often send a rent roll instead of the full lease to update the financials. The pipeline was built to handle these rent rolls but the execution was flawed. It relied on an LLM to parse a massive tabular document listing units, tenants, and monthly rents for an entire building. Faced with hundreds of rows, the LLM would hallucinate, or it would just process a handful of suites out of 200. Operators were forced to archive and reprocess those.

The rent rolls were just massive lists of units and dollars. They weren’t standardized—some had comma separators, some had the tenant’s personal name sometimes a business name, some had the currency sign before the figure—but the task was highly structured.

A deterministic approach turned out to be the right solution. We built a pattern-matching Rent Roll Uploader that handled these sheets natively without LLM involvement. We stopped forcing the LLM to do a calculator’s job.

The OCR blind spot

The evals measured whether the extraction prompts correctly pulled values from the OCR’d text. They did not measure whether the OCR’d text was correct in the first place.

If a commercial lease PDF showed a base rent of $150,000 and the OCR service dropped a zero, the extraction prompt would receive “15,000” as input, faithfully return “15,000,” and the eval would score it as correct because it matched the source text. The error happened upstream, invisible to everything downstream.

This is a known limitation we didn’t address. The evals assume OCR output is ground truth. In practice, this held true for most documents except the ones with handwriting corrections.

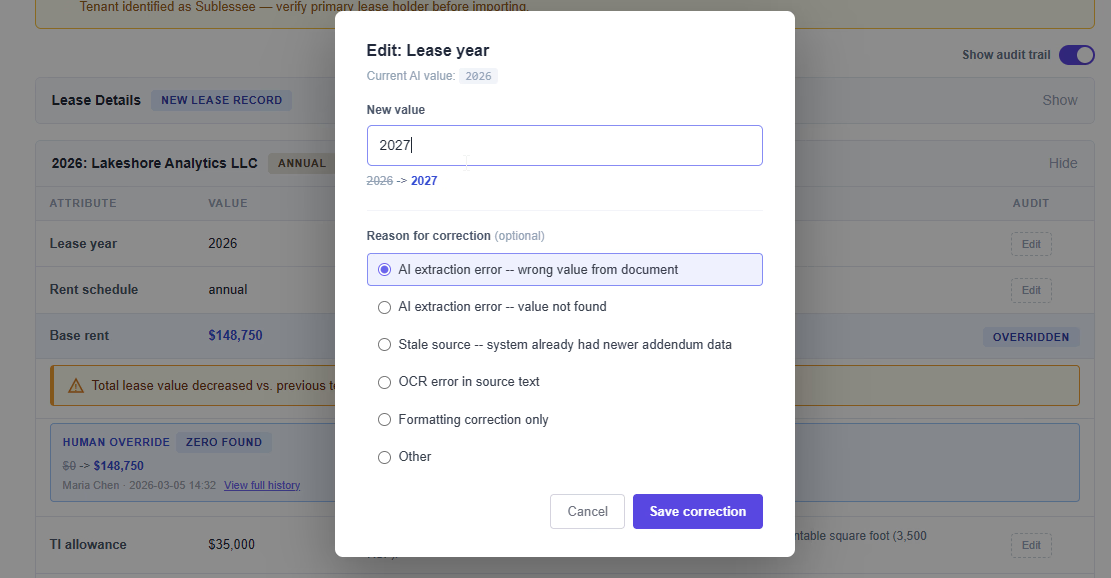

Annotating human corrections

The human-in-the-loop interventions were changing AI extractions. But without context on the nature of each correction, the data was hard to act on.

We added versioning on all fields, storing the full JSON representation of each record before and after every edit. This let us see not just that a field was overridden, but what the before and after values were.

For base_rent, 300 corrections broke down like this:

- 127 zero → positive: The prompt returned “not found” when a rent value existed. These are misses. The prompt failed to locate the value in the document. Could be unusual language, values split across pages, or extraction rules that didn’t cover the format.

- 127 positive → smaller positive: The AI extracted a number, a human corrected it downward. This is the ambiguous bucket. The AI might have grabbed the gross lease value instead of base rent, or combined figures it shouldn’t have. Or this could be the stale-source problem: the lease says $150k but the system already had a more current $132k negotiated in a later addendum.

- 33 positive → larger positive: The AI underextracted. Maybe it caught a monthly figure but didn’t multiply by 12, or found one year’s rent when a later escalation year was higher.

- 9 positive → zero: The AI returned a value that shouldn’t exist. Rarest category, 9 out of 300.

- 4 formatting only: Same numeric value, different formatting (e.g., 195000 → 195,000). We excluded these out of our error metrics since the data was technically accurate.

Without knowing whether the human corrected because the AI was wrong or because the system already had a more current value, you can’t tell how many are real extraction errors.

That’s why we added a reason field on corrections. At the time of writing, the categorized data wasn’t collected yet — operators had just started annotating. But the correction breakdown above shows why it matters: the same 12% correction rate could mean very different things depending on what’s driving it.

Once corrections are categorized, error rates become actionable. That’s the next step — separating real extraction failures from stale-source overrides.

What we ended up with

The error dashboard gave some understanding of the current precision and a way to prioritize future improvements. The audit trail made correction patterns visible. The prompt migration landed incrementally over two months and it became easier to track their flow via the AI gateway and easier to deploy changes. Application code became simpler once we removed the ineffective features. The eval suite gave us a baseline for what the current prompts could and couldn’t do.

What this cost

The pipeline was initially built over 6 months starting in March 2025. It went live without evals, without auditing, and without a definition of “good enough”. A long QA phase followed where edge cases were discovered and backfilled.

I can’t tell you how much the interventions improved accuracy, because there was no baseline. That’s not an unsatisfying gap in the story — it is the story. When you ship a system that touches financial contract data without measuring its error rate, you lose the ability to prove it got better.

What I can tell you is what changed structurally.

Before, the team reacted to anecdotal complaints. Now they have per-attribute correction rates segmented by contract type, rolling batch trends that show whether a prompt is improving or degrading, an audit trail that captures what the AI said before a human changed it, and annotations that distinguish genuine extraction errors from stale-source mismatches.

The system went from flying blind to having instruments.

While this post focused on base rent extraction, the same fixes — measurement, isolation, and deterministic safeguards — applied across all contract types in the pipeline.

The moral of this story is that AI features deserve the same product discipline as any other feature. It’s easy to skip evals, auditing, and success metrics when the technology feels like magic. Most teams I’ve seen building with LLMs have shipped without at least one of those. The ones that course correct early save themselves months of backfilling.

The fix wasn’t a fancier model. The fix was applying basic product operations (dashboards, audit trails, decoupling architecture, and deterministic fallbacks) to an AI feature. The boring stuff that should have been there from the start.