In short: A legacy two-prompt classifier had solid precision but weak recall, missing roughly one-third of cybersecurity jobs and forcing a human operator into hours of daily QA.

The fix was not prompt tweaking. We rebuilt the flow as an ensemble: heuristics for obvious matches, ML for broad coverage, and a focused LLM stage for nuanced decisions.

The result was a major recall jump (from the high 60s to the mid 90s) while keeping API costs flat.

Note: I’ve kept the high-level logic intact, but I’ve obfuscated specific internal metrics and insights to respect project confidentiality.

The Problem and V1’s Failure

The portal aggregates about 1,000 public sector job listings a day from across EU institutions and member state agencies. Only 3–5% of them matter to the portal: roles within the Cybersecurity divisions of those institutions. These feed a specialized vertical — a dedicated section where CERT analysts, NIS2 compliance officers, and security operations professionals browse openings and claim their professional profiles.

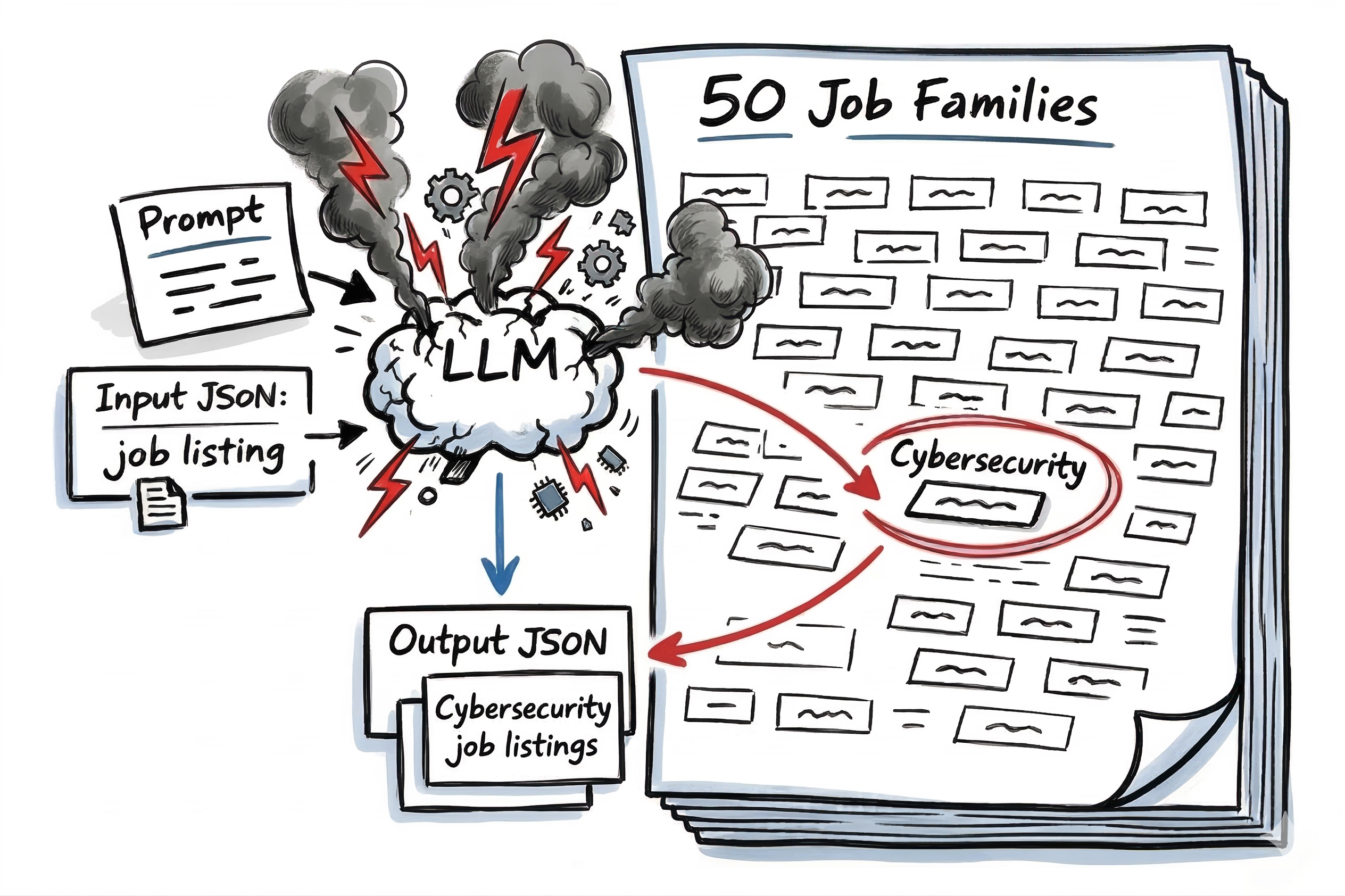

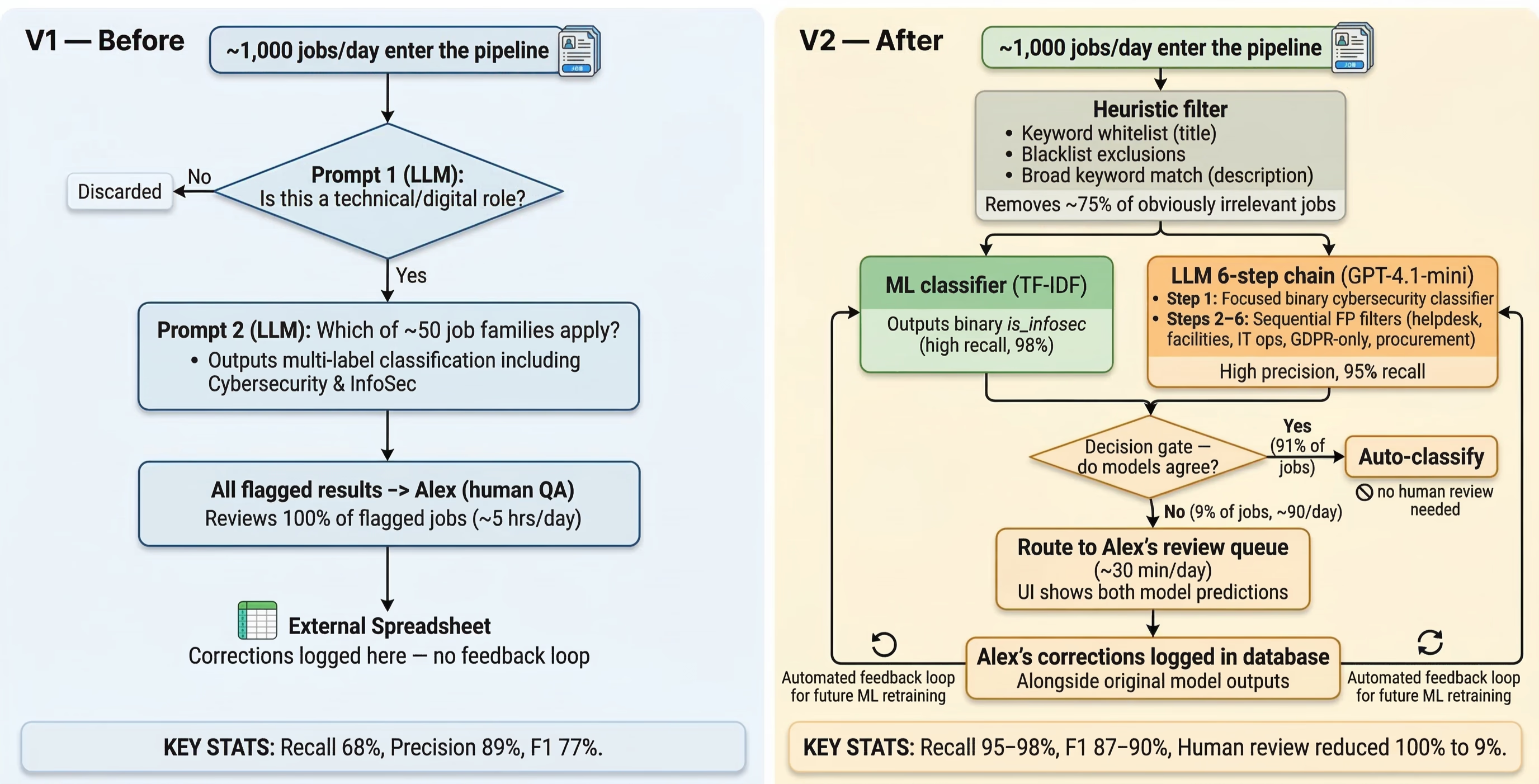

In V1, every single job was routed through a two-prompt chain via Portkey (an AI gateway). Prompt 1 asked: Is this a technical or digital role? If it was, the job was passed to Prompt 2, which asked: Which of the ~50 job families apply? Because the company didn’t fully trust the output, a single human operator — Alex — reviewed the flagged results. He was spending roughly 5 hours a day on QA.

The V1 metrics tell the story:

- Precision: 89% — when the system said “cybersecurity,” it was mostly right.

- Recall: 68% — but it was missing nearly a third of all actual cybersecurity jobs.

- F1: 77% — far from stellar considering Alex was still reviewing every flagged job AND hunting for the ones the system missed.

I was brought in as the product lead, but this project needed more than product direction. The classification pipeline was a technical problem being treated as a prompt-tweaking exercise. I leaned on my engineering background and set the technical direction.

The real cost wasn’t Alex checking the positive matches — it was correcting hundreds of false negatives every single week. We were generating 5× more false negatives than false positives. And the system wasn’t learning from its mistakes: all of Alex’s manual interventions were logged in a disconnected spreadsheet outside the app.

Getting alignment on what was actually broken

Part of the problem was that there were zero evals on V1. Nobody had actually measured the system’s precision, recall, or error distribution — so when things felt wrong, the company’s approach was prompt tweaks. Each tweak had the tendency to introduce downstream breakages but there was no way to know because nothing was being measured.

Leadership knew the system had issues, but without data they had the problem completely backward — they assumed we were dealing with a false positive problem.

Their instinct was the classic AI anti-pattern: just tweak the prompt.

It took showing the CEO the weekly FN/FP breakdown to prove that our actual problem was false negatives.

Raw Numbers

| Month | Total Jobs | Missed (FN) | Incorrectly Flagged (FP) | Correctly Caught (TP) |

|---|---|---|---|---|

| Jan 2025 | 56,573 | 693 | 171 | 1,060 |

| Feb 2025 | 53,118 | 613 | 139 | 907 |

| Mar 2025 | 49,218 | 596 | 146 | 1,144 |

Classification Metrics

- Recall

- Precision

- F1 Score

| Month | Recall | Precision | F1 Score |

|---|---|---|---|

| Jan 2025 | 60.47% | 86.11% | 71.09% |

| Feb 2025 | 59.67% | 86.71% | 70.71% |

| Mar 2025 | 65.75% | 88.67% | 75.51% |

Once we clarified what the problem was — and that prompt band-aids couldn’t fix a structural problem — we had the mandate to rethink the approach.

The context: A Kitchen-Sink Legacy Prompt

To understand why V1 missed nearly a third of cybersecurity jobs I had to look at the context around that prompt.

It was originally built for a different mission: categorizing every possible public administration role across EU institutions into ~50 job families. When the business pivoted to caring exclusively about Cybersecurity they kept using the same prompt.

Three things were killing recall:

- The attention trap. The LLM was holding ~50 job families in its context window. When a job like “Digital Operational Resilience Analyst” in a national CERT came through, the model would spot the giant list, confidently flag “IT & Digital Services,” and move on. Its attention was too diluted to aggressively hunt for the secondary cybersecurity connection.

- Highly defensive rules. The prompt’s rules and examples were laser-focused on what wasn’t a cybersecurity job (helpdesk technicians, desktop support, IT operations coordinators). This explains the high 89% precision — the prompt was terrified of false positives and guarded against them aggressively. But that same defensiveness meant ambiguous cases defaulted to “not cybersecurity”.

- Multi-label ambiguity. The prompt said “you can assign more than one category” but didn’t force exhaustive evaluation. If the model assigned “IT & Digital Services” and simply forgot to append “Cybersecurity & InfoSec,” we logged a silent false negative.

The V1 prompt wasn’t broken — it was perfectly optimized to answer the wrong business question. If we wanted to catch every cybersecurity job, we had to stop asking the LLM to be a generalist and replace the multi-label approach with a binary classifier: is this cybersecurity, yes or no?

Here’s a redacted version of the actual V1 prompt. Notice how cybersecurity is buried as one item in a list of ~50:

## MISSION

I want to classify public administration job listings into further job families. You can assign more than one.

## INPUT CONTEXT

### Background Info

These job listings are all from the EU public sector. On any European government job portal, all listings are initially classified as Policy, Administration, or Executive. [...]

## RULES

### Boundaries and constraints

The limited set of job families is below. No other job families are allowed. Do not invent a new job family.

- Policy & Legislation

- Legal Affairs

- IT & Digital Services

- Cybersecurity & InfoSec

[... 40+ other categories removed for brevity ...]

- IT Helpdesk & Desktop Support

- Other Administrative Positions

### Specific subgoals and rules

You can pick more than one job family for a listing. However, there are some exceptions:

1. If a listing states that it will be working with the Cybersecurity & InfoSec division, then add Cybersecurity & InfoSec...

2. If a listing has a department or unit of Cybersecurity, CERT, or Security Operations, then categorize it as Cybersecurity & InfoSec.

3. If a listing describes general helpdesk support, desktop troubleshooting, or basic network administration but not security operations, then categorize it as IT Helpdesk & Desktop Support and not Cybersecurity & InfoSec.

4. Any listing that is mostly about general IT support and not NIS2, DORA, CERT operations, or security incident response should be categorized as IT Helpdesk & Desktop Support and not Cybersecurity & InfoSec.

5. If it mentions NIS2, DORA, ENISA, or CERT/CSIRT operations, then it belongs in Cybersecurity & InfoSec.

Examples of job listing titles that are IT Helpdesk & Desktop Support but not Cybersecurity & InfoSec:

- Helpdesk Technician

- IT Support Specialist

- Desktop Support Analyst

[... JSON Output Instructions Omitted ...]The prompt tells the story on its own. Cybersecurity is one line item buried in a ~50-family list. The only specific rules about cybersecurity are defensive — three out of five are about what isn’t cybersecurity. And the examples are all false positive guards (helpdesk technicians, IT operations coordinators). Nothing in this prompt encourages the model to aggressively hunt for cybersecurity jobs.

Defining Success

Before writing any new code, we had to define what “better” actually meant.

The core problem wasn’t just that the V1 model was missing jobs — it was that Alex, our domain expert, was chained to a QA queue reviewing every single flagged listing. Even if we built a model with fewer errors, if Alex still had to review 100% of the volume, we had failed.

Our goals:

- Reduce human review volume. Alex shouldn’t be reviewing every job — only the ones the system can’t confidently classify on its own.

- Layer cost to complexity. Keyword filters for the obvious cases, ML for the bulk, LLM for the nuanced decisions, and Alex only for the jobs where the models disagree.

The initial hypothesis: We assumed that if we trained a lightweight ML model to output an is_infosec boolean alongside a confidence_level, we could beat the legacy LLM’s classification. More importantly, we hoped that the confidence score would give us a mathematical threshold to determine exactly when a human needed to intervene.

SPOILER ALERT: this hypothesis turned out to be half right — we got the ML classifier, but the HITL trigger ended up being model disagreement rather than confidence thresholds.

The target metrics. The new system had to decisively beat our Jan–April 2025 baselines:

- Precision: 89.41%

- Recall: 68.74% — this was the bleeding neck. We needed this in the 90s.

- F1 Score: 77.72%

Diagnosing the Error Patterns: False Negatives and False Positives

I pulled a balanced test dataset of 200 historically misclassified jobs — 50 false positives, 50 false negatives, alongside 100 correct classifications — and categorized the errors. Leadership had initially pushed for “validation” against 10 records.

That’s not a test set — it’s noise.

To put their mind at ease, I ran a cost estimate for the full eval against our LLM pipeline. A few dollars. That was all it took to get the green light.

The false negative problem

We knew we were missing nearly a third of all cybersecurity jobs, but seeing the actual titles was the first piece of the puzzle. The V1 model was entirely missing three core buckets of cybersecurity staff.

- Junior and trainee security roles: titles like “NIS2 Compliance Implementation Officer,” “Junior CERT Incident Handler – Network Intrusion Response,” or “Part-Time Security Operations Analyst – EU-CERT”.

- EU-regulation-specific roles: titles like “Cybersecurity Awareness and Outreach Coordinator” or “Digital Operational Resilience Analyst (DORA)”.

- Operational security specialists: eIDAS Trust Services Auditors, EU Restricted Network Administrators, and similar roles.

This was the ~50-family attention problem from the legacy prompt in action. A “NIS2 Compliance Implementation Officer” was getting misclassified as a generic “Legal Affairs” or “Policy & Legislation” role because the LLM spotted the broader administrative bucket first and moved on, entirely missing the cybersecurity connection.

The false positive problem

Though false positives were less frequent (remember, V1 had 89% precision), they were just as predictable. The LLM was getting tripped up by jobs that sounded like security, or were organizationally adjacent to security teams, but had nothing to do with core cybersecurity operations.

General IT roles like “IT Operations Coordinator – Digital Services Portal” or “Helpdesk Services Supervisor – Citizen Portal”. Facilities-adjacent roles like “Data Centre Facilities Coordinator”. Building security staff — “Building Access Systems Technician” working in a secured government building. IT training roles like “IT Training Specialist” that happened to mention cybersecurity awareness in the job description.

A snapshot of the data was moved from Postgresql to Elasticsearch for faster insights.

This Elasticsearch query identifies “False Positive” keywords by finding job title terms that are statistically overrepresented in human-corrected data compared to the system’s current automated classifications.

GET alex_jobs/_search

{

"size": 0,

"query": {

"bool": {

"must": [

{ "term": { "human_override_is_infosec": false }}

]

}

},

"aggs": {

"unique_fp_words": {

"significant_text": {

"field": "title",

"filter_duplicate_text": true,

"background_filter": {

"bool": {

"should": [

{

"bool": {

"must": [

{ "term": { "is_infosec": true }},

{ "bool": { "must_not": { "exists": { "field": "human_override_is_infosec" }}}}

]

}

}

],

"minimum_should_match": 1

}

}

}

}

}

}

The key insight

This analysis gave me a better understanding of what was going on. AI errors in this domain were not random — they followed certain patterns.

We didn’t just need a smarter model — and we definitely didn’t need to blindly upgrade to the latest one. I opposed leadership’s push to jump to the next GPT without any evals. Once we introduced evals, that upgrade (still using the V1 strategy) showed no significant improvement.

We needed to re-architect the system acknowledging those specific failure modes. To fix the 68% recall, we had to cast a wide net to catch the subtle roles like NIS2 compliance officers and CERT incident handlers.

But casting a wider net inherently creates more false positives. To prevent our precision from tanking, that wide net had to be followed by multi-step LLM prompts to weed out the helpdesk technicians and IT operations coordinators. The goal of the filters wasn’t to beat V1’s 89% precision — it was to hold the line and keep precision stable while recall climbed into the 90s.

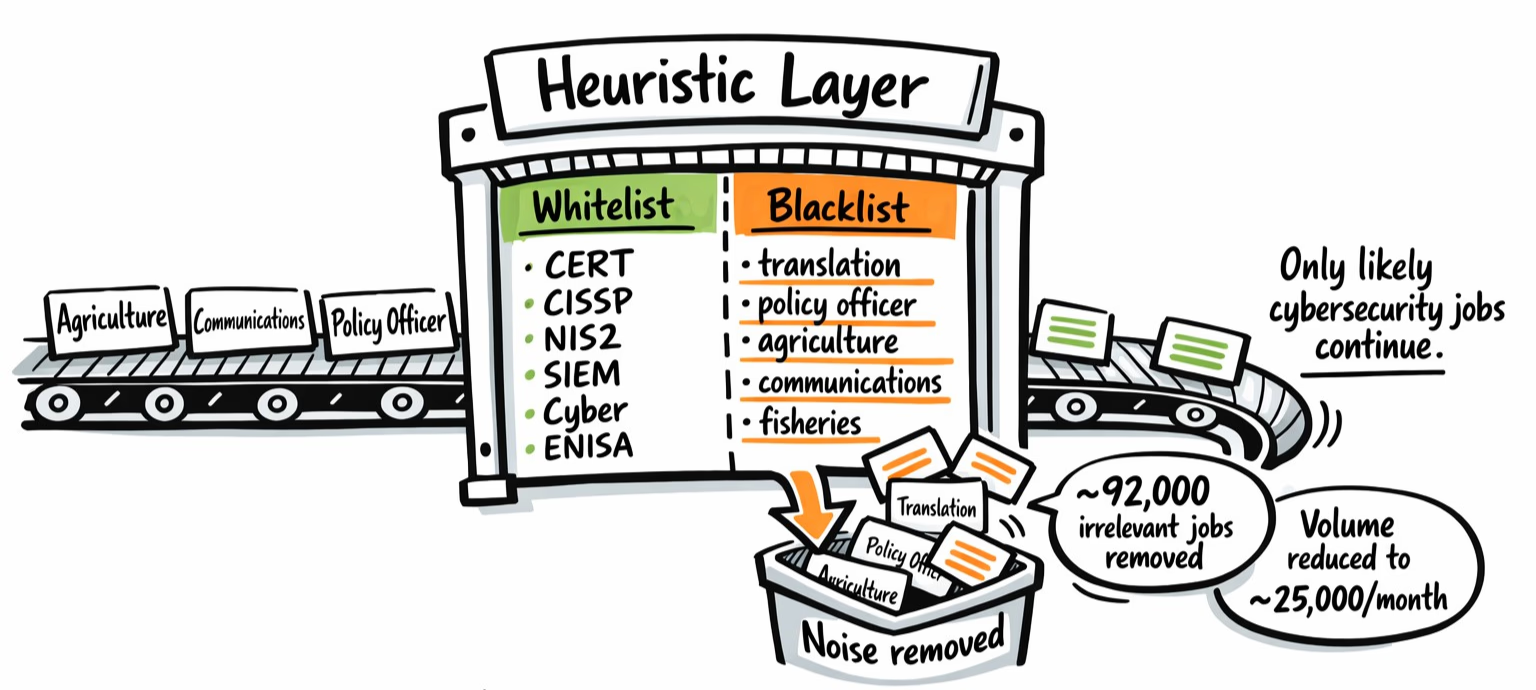

The Heuristic Layer: Reducing LLM Classification Volume by 75%

Processing 1,000 jobs a day through a multi-step LLM pipeline is slow and expensive, especially to find out a listing is for an “Agricultural Policy Officer – Common Fisheries Programme”. Can we just send fewer jobs to the classifier?

We tested a whitelist heuristic on 90 days of historical data (~125,000 jobs) to see if keyword filters could safely isolate the cybersecurity jobs before the LLM step. We compared a broad keyword set (cyber, infosec, security, NIS2, ENISA) against a tight keyword set (37 specific cybersecurity terms like CISSP, SIEM, Penetration Testing).

Here is how the experiments played out:

| Strategy | Target | Missed InfoSec Jobs (FN) | Jobs Sent to LLM (FP) | Verdict |

|---|---|---|---|---|

| Broad | Title Only | 4,204 | 828 | Unacceptable recall. |

| Tight | Title Only | 662 | 2,947 | Misses ~7 valid jobs daily. |

| Broad | Title + Description | 86 | ~20,000 | Description match is too greedy. |

| Tight | Title + Description | 2 | ~85,000 | Classification costs too high. |

The data showed a clear tradeoff. Title-only filters missed ambiguous roles (like “Digital Operational Resilience Analyst” within a national CERT), while description filters pulled in any generic government IT listing that mentioned security protocols, data protection compliance, or access control procedures.

Final heuristic logic

We combined the approaches and introduced a blacklist to filter out obvious non-security roles. To pass into the LLM pipeline, a job had to meet this criteria:

- Title contains a tight keyword (e.g.,

CISSP,CERT) AND does not contain a blacklist keyword (translation,policy officer,communications,agriculture,fisheries). - OR, description contains a broad keyword (

cyber,infosec,NIS2,ENISA).

Out of 125,000 jobs, this hybrid heuristic missed only a handful of cybersecurity jobs.

The missed jobs fell into three buckets:

- generic administrative titles with no security signal at all (“Administrative Specialist III,” “Associate Director, Programme Development,” “Coordinator, Facilities Planning”)

- operational roles where specific terms weren’t in our keyword list (“Crisis Response Coordination Officer,” “Digital Resilience Programme Analyst”)

- “Senior Cybersecruity Analyst” that our filter missed because the source data misspelled Cybersecurity. No heuristic survives dirty input, but the volume was so small we didn’t bother fixing it.

On the other side, the filter removed ~92,000 irrelevant jobs, reducing our classification volume from 100% of the database to ~25,000 jobs per month. By dropping the noise before it reached the model, we cut API costs (more on this later) and latency. Only the jobs that survived this filter were passed to the V2 pipeline.

is_infosec:true AND

(title:(*AppSec* OR "Blue Team" OR *CASB* OR *CERT* OR *CISM* OR *CISSP* OR *CISO*

OR *Cryptograph* OR *CTF* OR *Cyber* OR *DLP* OR *DORA* OR *eIDAS* OR *ENISA*

OR *EUCI* OR *Exploit* OR *Forensic* OR *GRC* OR *IAM* OR *IDS* OR *InfoSec*

OR *IPS* OR *Malware* OR *NIS2* OR *OSCP* OR *PAM* OR *PenTest* OR *Penetration*

OR *PKI* OR "Red Team" OR *Ransomware* OR *SAST* OR *DAST* OR *SecOps*

OR "Security Engineer*" OR *SIEM* OR *SOAR* OR "SOC Analyst" OR "Threat Intel*"

OR *Vulnerabilit* OR *vCISO* OR "Zero Trust")

AND NOT title:(*translation* OR *interpreter* OR *policy\ officer* OR *agriculture*

OR *fisheries* OR *customs* OR *budget\ officer* OR *accountant* OR *legal\ secretary*)

OR description_md:(*CISSP* OR *InfoSec* OR *Cyber* OR *NIS2* OR *ENISA* OR *DORA* OR *EUCI*))V2: A TF-IDF and LLM Ensemble Classifier

With six months of human-corrected data, we had a labeled dataset of 78,662 jobs. The data wasn’t clean — Alex’s corrections lived in a CSV spreadsheet outside the app, so we had to download both the spreadsheet and the database records and reconcile them into an aggregate CSV tracking every HITL intervention. Not glamorous, but it gave us ground truth.

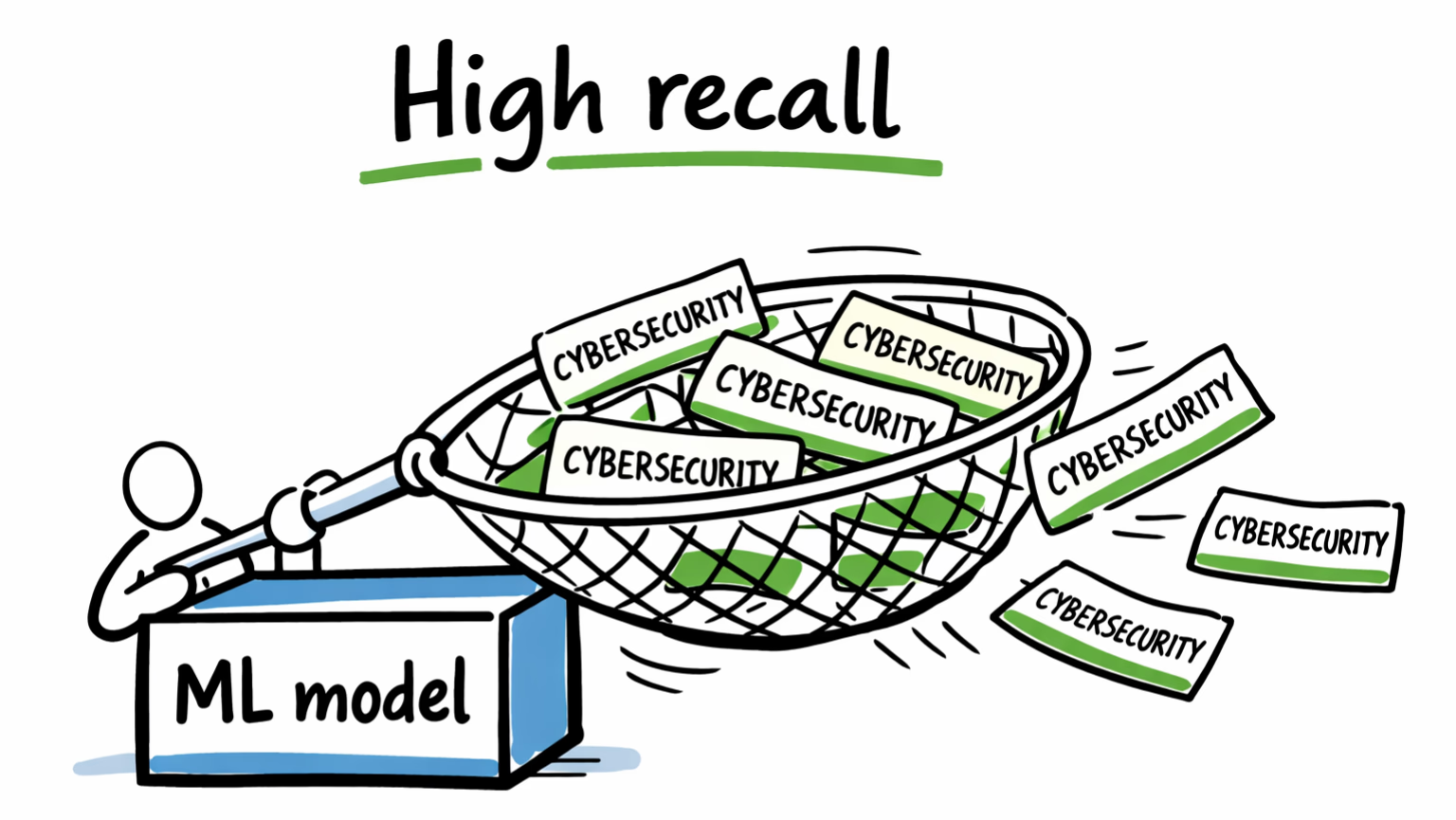

We used Alex’s manual corrections to train a TF-IDF classifier. Training took under four minutes on a dev laptop. On our initial 20% holdout test set (15,733 jobs), the model output looked like this:

- Precision: 99.1%

- Recall: 97.7%

- F1 Score: 98.4%

Test sets rarely survive contact with production data. When we ran this model against the full unseen dataset (279,000 jobs), the metrics normalized:

- Precision: 80%

- Recall: 98%

- F1: 88%

The ML model traded precision for recall. This was the opposite of V1’s problem — and exactly what we wanted from one half of the ensemble.

The LLM multi-step chain

While the ML model acted as our high-recall net, we redesigned the LLM prompt to handle precision. Instead of a single multi-label prompt, we built a sequential pipeline separating the logic of finding cybersecurity jobs from the logic of excluding false positives.

- Step 1: Primary classification (look for explicit cybersecurity and security operations signals).

- Steps 2–6: Sequential exception filters. These run only if Step 1 returns true. They explicitly reverse known false positives (general IT/helpdesk, facilities & building security, IT operations & service desk, data protection (GDPR-only, not cybersecurity), and IT asset/procurement management).

The multi step filter prompts

Here’s a simplified version of the 6 steps prompts for V2.

Primary Classifier:

## TASK

Determine if an EU public sector job listing belongs to a Cybersecurity & InfoSec function.

Mark as `"infosec_department": true` only if:

- The job is explicitly assigned to "Cybersecurity," "Information Security," "CERT," "Security Operations," or a close variant;

- Or there is **no department listed**, and the listing includes strong cybersecurity signals such as `NIS2`, `DORA`, `ENISA`, `CSIRT`, `EUCI`, SOC analyst, security engineer, penetration tester, threat intelligence analyst, vulnerability management, SIEM operations, security architect, cyber risk analyst, digital forensics, or security operations centre;

- If the job is clearly assigned to a **non-cybersecurity department** such as IT Helpdesk & Desktop Support, Data Protection & Privacy, or Property & Building Management, return `false` even if keywords appear.

## INPUT

{{job_listing}}

## OUTPUT

Return only this JSON:

{

"infosec_department": true | false,

}Subsequent filters

Run only if the job was deemed cybersecurity from the primary prompt.

Filter 1 — General IT & Helpdesk:

## TASK

If the job is for IT helpdesk, desktop support, general systems administration, or IT service desk (e.g. helpdesk technician, desktop support analyst, IT operations coordinator), it is not part of core cybersecurity operations.

## INPUT

{{job_listing}}

## OUTPUT

{

"infosec_department": false,

}Filter 2 — Facilities & Building Security:

## TASK

If the job involves physical security, building access systems, facilities management, or data centre facilities upkeep—even near secure government infrastructure—it is not Cybersecurity & InfoSec staff.

## INPUT

{{job_listing}}

## OUTPUT

{

"infosec_department": false

}Filter 3 — IT Operations & Service Desk:

## TASK

If the job is for IT operations management, basic network administration, or IT service management—even if it supports infrastructure used by security teams—it belongs outside Cybersecurity & InfoSec.

## INPUT

{{job_listing}}

## OUTPUT

{

"infosec_department": false,

}Filter 4 — Data Protection (GDPR-only):

## TASK

If the job is in Data Protection, GDPR compliance, or privacy policy—even with security-adjacent language like "data breach notification" or "impact assessment"—and lacks operational cybersecurity duties (SOC, CERT, incident response, vulnerability management), it is not Cybersecurity & InfoSec.

## INPUT

{{job_listing}}

## OUTPUT

{

"infosec_department": false

}Filter 5 — IT Asset & Procurement Management:

## TASK

If the job focuses on IT asset tracking, software licensing, IT procurement, or digital inventory management but lacks cybersecurity assignment, it's not Cybersecurity & InfoSec.

## INPUT

{{job_listing}}

## OUTPUT

{

"infosec_department": false

}We ran evals on this pipeline on a balanced 200-job test set using three model tiers:

- GPT-4.1: Precision 91%, Recall 91%, F1 91%

- GPT-4.1-mini: Precision 91%, Recall 91%, F1 91% (~30% cheaper)

- GPT-4.1-nano: Precision 97%, Recall 67% — the smaller model couldn’t reliably apply the nuanced exception logic in steps 2–6, so it over-filtered.

We deployed GPT-4.1-mini — same F1 as the full model at a fraction of the cost.

Running evals was a critical step to gain confidence before deploying this LLM chain to production. And a stepping stone to future prompt iterations.

The ensemble architecture

So we had two models with distinct strengths. The TF-IDF model had 98% recall. The LLM had higher precision.

Instead of choosing one, we designed an ensemble: run both models on every job that survived the heuristic filter. If they agree, auto-classify. If they disagree, route the job to a human.

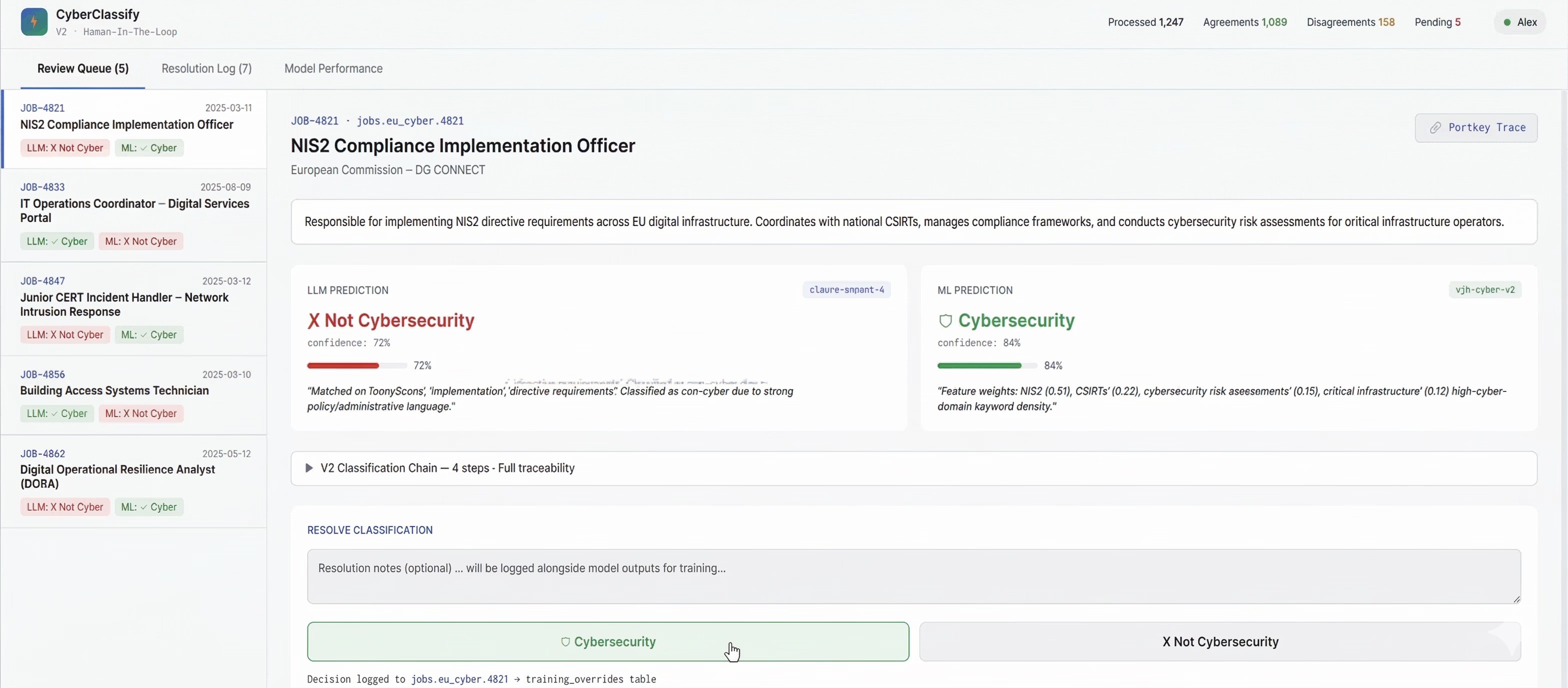

Human-in-the-Loop: From 1,000 Reviews/Day to 90

In V1, Alex reviewed every flagged job, spending 5 hours a day on QA. His corrections were logged in an external spreadsheet, meaning the system never learned from its mistakes.

We didn’t flip the switch overnight. For the first two weeks, Alex continued using the V1 workflow while we deployed V2 in production alongside it. We ran daily calls with Alex to compare: V2 would flag jobs, and Alex would confirm whether the system correctly tagged what he’d have manually corrected under V1. After two weeks of shadow deployment, we made the full cutover.

In V2, we redesigned the operational workflow around the ensemble’s disagreement rate. These are the numbers from the first two biweekly production runs (Aug–Sep 2025) — early enough to validate the design, but not the full picture. The next section covers five months of drift monitoring that shows how these numbers evolved.

- LLM standalone: Precision 86%, Recall 95%, F1 90%

- ML standalone: Precision 78%, Recall 98%, F1 87%

When the models agreed (91% of jobs), baseline accuracy was >91%. We automated these completely, bypassing human review.

When they disagreed (9% of jobs), we routed the job to Alex’s queue. The UI displayed both model predictions. In these disagreement cases, the ML model was right 56% of the time, and the LLM was right 44%.

When Alex resolves a disagreement, his final decision is logged in the database alongside the original model outputs, creating an automated dataset for future training.

We also added Portkey traces linking every LLM call back to the job record in the database. In V1, when a job was misclassified, there was no way to find the original AI call — you couldn’t see what the model was given, what it returned. Debugging a false positive meant guessing. In V2, every classification is traceable: the input, the model’s response at each step of the chain, and Alex’s final override if one exists.

Our original hypothesis was that ML confidence scores would tell us when to involve a human. In practice, disagreement was the cleaner signal. By treating model disagreement as a feature rather than a failure, we isolated the exact jobs that required human judgment.

We hit our target metrics while reducing Alex’s daily review volume from 1,000 jobs to roughly 90. Reviewing 100% of 1,000 jobs a day is a full-time QA role. Reviewing the 9% of disagreements is a 30-minute daily check.

Monitoring Model Drift in Production

Once you automate classification, you need to know if accuracy is drifting over time — ideally before someone complains.

I architected a set of Metabase dashboards to track the pipeline’s health on a biweekly cadence. The data team could track four specific signals:

1. Override rate

Out of all listings evaluated by both models, what percentage did a human override? A dropping override rate means the automation is handling more volume. A rising rate indicates a shift in the incoming data.

To ensure we measure the full pipeline and not partial runs, we strictly filter to jobs where both models completed.

2. Disagreement rate

Every 14 days, we measure what percentage of classified jobs resulted in a model disagreement. A rising disagreement rate means something changed — either in the incoming data or in model behavior — and Alex’s review queue is growing. A falling rate means the models are converging, though that’s only good news if accuracy is holding steady alongside it.

We partition into clean 14-day windows using generate_series in PostgreSQL, and break down the direction of disagreement: LLM says yes / ML says no, vs. ML says yes / LLM says no. If one direction starts dominating, it tells us exactly which model is drifting.

3. Disagreement resolution

When the models disagree and a human resolves the tie, which model was right? We track resolution outcomes to generate actionable metrics (pct_sided_with_llm and pct_sided_with_ml). If humans consistently side with the LLM over the ML, that generates a specific, targeted retraining signal for the ML pipeline.

4. Model performance with rolling averages

We track biweekly precision, recall, and F1 for each model independently. To smooth out weekly noise, I ensured we used a biweekly rolling average.

The most important design decision here was how to define ground truth for an automated system:

- If a human reviewed it, their override is the ground truth.

- If the models agree and no human intervened, treat that agreement as ground truth.

- Any unresolved disagreements were excluded from ground truth until a human intervened.

This is a pragmatic compromise. We use the best available signal: human judgment first, model consensus second, and we’d ignore unresolved disagreements.

What the data actually showed

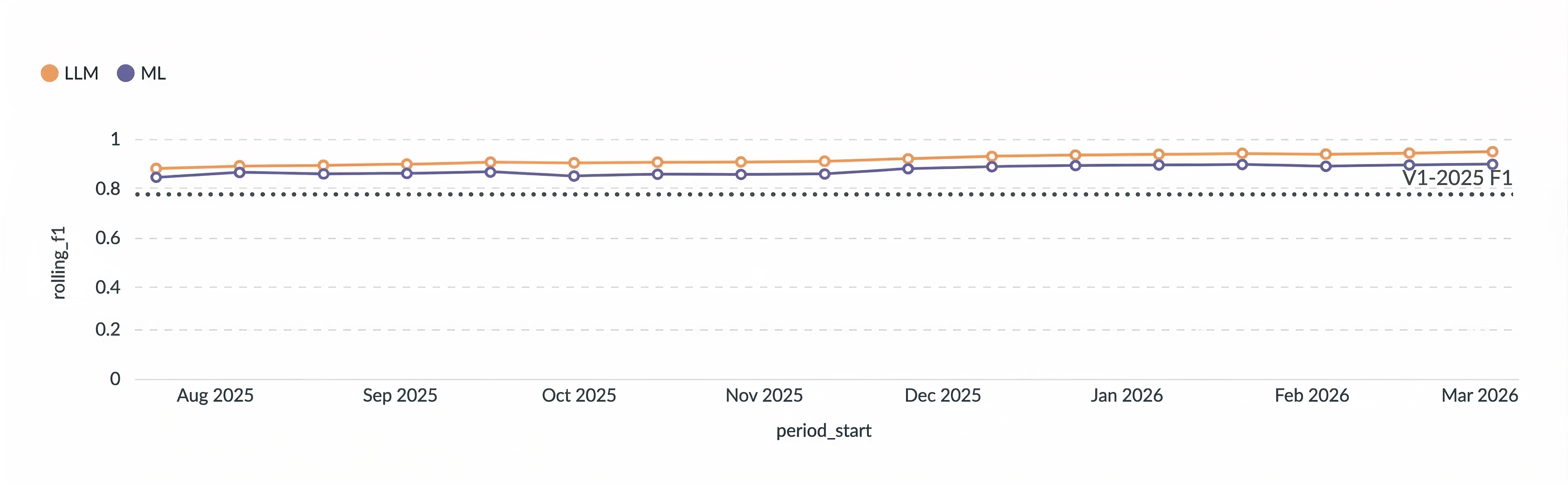

Over six months of biweekly tracking (Jul 2025 – Jan 2026), the trend was clear: the system improved over time. LLM F1 stabilized from 0.88 in late July to 0.95 by mid-December. The rolling F1 followed the same trajectory — 0.88 to 0.94.

But the dashboards also caught a dip. In the late September / early October period, LLM precision dropped from 0.91 to 0.86, and ML precision fell from 0.79 to 0.71. We noticed the dip but it self-corrected by the next period. The monitoring was working as designed.

The ML model’s behavior was remarkably consistent throughout: recall never dropped below 0.97, while its weakness was always precision. This confirmed the ensemble design — the ML catches everything, the LLM handles precision, and the monitoring confirms both are playing their assigned roles.

What We Learned

Here’s where we started and where we ended up:

| Metric | V1 (LLM only) | V2 (Heuristic + ML + LLM Ensemble) |

|---|---|---|

| Precision | 89% | 86% (LLM) / 78% (ML) |

| Recall | 68% | 95% (LLM) / 98% (ML) |

| F1 | 77% | 90% (LLM) / 87% (ML) |

| Human review volume | 1,000 jobs/day (100%) | ~90 jobs/day (9%) |

| Alex’s daily QA time | ~5 hours | ~30 minutes |

| Feedback loop | None (spreadsheet) | Automated (corrections logged in DB) |

| LLM cost | ~$1/day (all jobs) | ~$1/day (post-heuristic filter only) |

Quick note on cost

The cost on this step of the pipeline was actually the same. We went from running 2 prompts on all jobs to running 6 prompts on 25% of the jobs. The obvious saving was Alex’s extra 4 hours.

Between August 2025 and March 2026 this pipeline used 444M tokens and its AI provider cost was 190$.

Takeaways

Four takeaways from the project:

Start with the data you already have. Six months of human corrections turned out to be the most valuable asset. The ML model trained on Alex’s judgment calls outperformed the V1 LLM on recall by 30+ points. If you have a human in the loop, you already have a labeled dataset — you just might need to clean it up.

Heuristics aren’t beneath you. A keyword filter that took an afternoon to build reduced our classification volume by 75% before any model touched the data. Less API cost, less latency, and a cleaner signal for the downstream models.

Disagreement is a feature. Instead of picking one model, we used the gap between two models as a signal. The 9% of jobs where ML and LLM disagree is exactly where human attention has the highest ROI. Our original hypothesis was that confidence scores would be the HITL trigger — in practice, a simpler binary signal worked better.

Measure the system, not just the model. Biweekly dashboards tracking override rates, disagreement patterns, and which model the human sides with turned “is this working?” from a gut feeling into a number. The monitoring is what makes it safe to keep reducing human involvement over time — and it’s what caught the September precision dip.

What’s next

The system still has a known blind spot: when both models agree on the wrong answer, nothing gets flagged for human review. The next iteration would be periodic random-sample audits — pulling a small batch of auto-classified jobs for Alex to spot-check, even when the models agree. That’s the difference between a system that works today and one you can trust to keep working.

But the biggest change wasn’t technical — it was in the team’s approach to delivering AI features. I put together a separate post going through that: 8 Standards for Shipping Production LLM Features.